AI Anomaly Detection in ERP Systems: Case Study

Enterprise finance teams spend thousands of hours each quarter chasing data entry errors that should never have made it past the first screen. LITSLINK built an AI anomaly-detection assistant that integrates into the ERP workflow and flags deviations as soon as they appear. The result: fewer corrections, faster closes, and financial data your audit team can actually trust.

- 85%+ reduction in manual anomaly review time

- ~60–70% drop in false-positive alert rate

- Detection across 5 ERP modules simultaneously

- 80%+ faster post-close triage (from days to hours)

Project Details

The client’s finance team already knew where their data quality problems lived — they just couldn’t catch them at scale. Manual spot-checks covered 10–15% of transactions. The rest passed through on trust. LITSLINK was brought in to close that gap: design, build, and deploy an AI anomaly detection assistant that could monitor ERP data continuously and flag deviations the moment they appeared.

CLIENT

INDUSTRY

SOLUTION

SERVICE

PLATFORM

SCOPE

DURATION

LOCATION

Business Challenge

If you manage financial data in a large ERP system (SAP, Oracle, Dynamics, etc.), you already know the problem. Somebody enters a figure that is off by an order of magnitude. A vendor code gets miskeyed. A cost center allocation drifts outside its normal range, and nobody notices until the quarterly review. By then, the damage is done: audit flags, rework cycles, and a lot of uncomfortable conversations with controllers who trusted the numbers. AI financial anomaly detection was the only realistic path to keeping pace with the data volume.

The client’s situation was not unusual, but it was getting worse. As transaction volumes grew, the gap between what existing systems could catch and what actually needed catching widened. Several issues in particular were driving the decision to look for something better:

Manual anomaly detection

Finance teams were reviewing entries by hand or spot-checking batches — a method that works at a small scale but collapses when you're processing thousands of line items per day. The effort was real; the coverage was not.

Rule-based system limitations

The existing validation logic was hardcoded: fixed thresholds, static ranges. Anything that fell within the rules passed, even if it was clearly anomalous relative to historical behavior. The rules couldn't learn, adapt, or account for seasonal variation.
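To make the limitation concrete, here is a minimal sketch that contrasts a fixed threshold with a per-month baseline. The amounts, the `FIXED_LIMIT` value, and the function names are hypothetical and chosen only for illustration; they are not taken from the client's validation logic.

```python
from statistics import mean, stdev

# Hypothetical history for one cost center: (calendar month, amount).
# December spending is routinely higher than January spending.
HISTORY = [
    (1, 10_200), (1, 9_800), (1, 10_500),
    (12, 24_000), (12, 25_500), (12, 23_800),
]

FIXED_LIMIT = 30_000  # static rule: anything at or under the limit passes


def passes_fixed_rule(amount: float) -> bool:
    """Hardcoded validation that ignores when the entry was posted."""
    return amount <= FIXED_LIMIT


def is_unusual_for_month(month: int, amount: float, k: float = 3.0) -> bool:
    """Compare the amount with the history of the same calendar month."""
    same_month = [a for m, a in HISTORY if m == month]
    mu, sigma = mean(same_month), stdev(same_month)
    return abs(amount - mu) > k * sigma


# The same 24,500 posting is checked for January and for December.
print(passes_fixed_rule(24_500))          # the static rule cannot tell them apart
print(is_unusual_for_month(1, 24_500))    # far outside January's history
print(is_unusual_for_month(12, 24_500))   # in line with December's history
```

The fixed rule accepts the entry in both months, while the seasonal comparison treats it as an outlier only in January.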

High false-positive rates

When the team did try to tighten the rules, they ended up flagging too many legitimate entries. Alert fatigue set in. People stopped investigating because most flags were noise, which, paradoxically, allowed real anomalies to pass unnoticed.

Our Solution: AI-Powered Anomaly Detection Assistant

The central question here was simple: how do you tell the difference between “unusual” and “wrong” in financial data without a human sitting behind every transaction?

LITSLINK’s answer was to build a system that doesn’t rely on predefined rules at all. Instead of encoding what’s acceptable, the team built an AI solution that learns what’s normal from the data itself and then surfaces anything that deviates from that learned baseline.

The approach relies on unsupervised machine learning, with no labeled datasets or manual tagging. The algorithm builds its own model of expected behavior for each column, table, and module, and updates it as new data comes in.
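One common way to keep a baseline current without storing every historical value is an incremental mean and variance update (Welford's method). The sketch below assumes that technique purely for illustration; the case study does not state which update rule the production model uses, and the `RunningBaseline` class and the key names are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass
class RunningBaseline:
    """Running mean and variance for one ERP column (Welford's method)."""
    count: int = 0
    mean: float = 0.0
    m2: float = 0.0  # running sum of squared differences from the mean

    def update(self, value: float) -> None:
        self.count += 1
        delta = value - self.mean
        self.mean += delta / self.count
        self.m2 += delta * (value - self.mean)

    @property
    def std(self) -> float:
        # Sample standard deviation; reported as 0.0 until two values exist.
        return math.sqrt(self.m2 / (self.count - 1)) if self.count > 1 else 0.0


# One baseline per (module, table, column); the key layout is an assumption.
Key = Tuple[str, str, str]
baselines: Dict[Key, RunningBaseline] = {}


def observe(key: Key, value: float) -> None:
    """Fold one new value into the baseline for its column."""
    baselines.setdefault(key, RunningBaseline()).update(value)


for amount in [100.0, 102.0, 98.0, 101.0, 99.0]:
    observe(("AP", "invoices", "amount"), amount)

baseline = baselines[("AP", "invoices", "amount")]
print(baseline.count, baseline.mean, round(baseline.std, 3))
```

Each new transaction adjusts the stored statistics in constant time, so the baseline follows the data as it arrives.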

This is what separates a genuine AI anomaly detection solution from a dressed-up rule engine. A trained model tells you what the data itself considers unusual right now, whereas rules tell you what someone once thought was wrong. That distinction matters when your business environment is constantly shifting.

AI-Based Anomaly Detection

The core detection layer uses a custom unsupervised learning algorithm that analyzes distribution patterns across ERP columns. It identifies values that significantly deviate from historical observations without requiring any pre-labeled anomaly examples.
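The custom algorithm itself is not published, so the following is only a stand-in that shows the general idea of label-free, distribution-based detection on a single column. It uses the modified z-score built on the median absolute deviation (MAD), a standard robust statistic. The function name, the 3.5 threshold, and the sample amounts are assumptions.

```python
from statistics import median


def robust_outliers(values, threshold=3.5):
    """Return (index, value) pairs that sit far from the column's median.

    Uses the modified z-score based on the median absolute deviation (MAD),
    which is less distorted by the outliers it is trying to find than a
    mean and standard deviation would be.
    """
    values = list(values)
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        return []  # no spread in the history, so nothing can be scored
    flagged = []
    for i, v in enumerate(values):
        score = 0.6745 * (v - med) / mad
        if abs(score) > threshold:
            flagged.append((i, v))
    return flagged


# Hypothetical invoice amounts; one entry is off by an order of magnitude.
amounts = [1250, 1190, 1310, 1275, 12500, 1230, 1205]
print(robust_outliers(amounts))
```

No labeled examples are needed: the column's own distribution defines what counts as a significant deviation.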

Financial Data Monitoring

The system pulls financial data from ERP modules, building a baseline to detect unusual spending patterns, vendor allocations, and cost center activity. It also uses AI to scan financial statements and flag discrepancies across reporting periods.

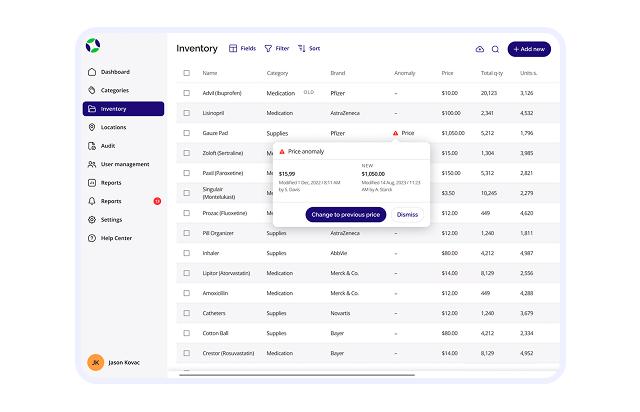

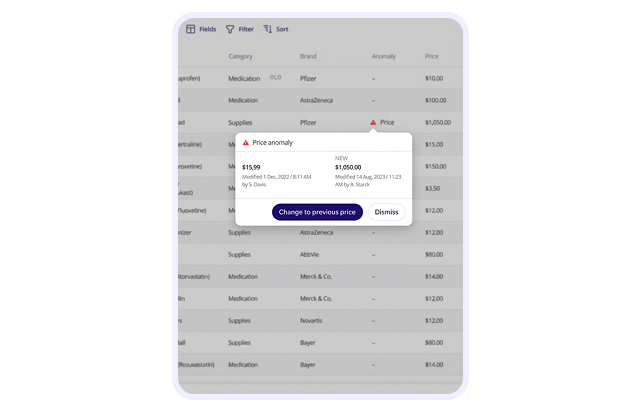

Real-Time Alerts

Detected anomalies are scored by severity and delivered with AI-generated explanations. Instead of a raw flag that says "check this," the system tells the reviewer why a value looks suspicious, what the expected range was, and how far the entry deviates. That context turns a notification into something actionable.
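As a rough illustration of what such an alert could carry, the sketch below turns a baseline (mean and standard deviation) into a severity label plus a plain-language explanation. The explanation here comes from a simple text template rather than a generative model, and the `Alert` fields, the severity bands, and the `k` multiplier are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Alert:
    field: str
    value: float
    expected_low: float
    expected_high: float
    severity: str
    explanation: str


def build_alert(field: str, value: float, mean: float, std: float,
                k: float = 3.0) -> Optional[Alert]:
    """Return an Alert if value falls outside mean +/- k*std, else None."""
    if std <= 0:
        raise ValueError("std must be positive to score a deviation")
    low, high = mean - k * std, mean + k * std
    if low <= value <= high:
        return None
    deviations = abs(value - mean) / std
    # Severity bands are illustrative, not production thresholds.
    if deviations >= 10:
        severity = "high"
    elif deviations >= 5:
        severity = "medium"
    else:
        severity = "low"
    explanation = (
        f"{field} = {value:,.2f} is {deviations:.1f} standard deviations from "
        f"the historical mean of {mean:,.2f}; the expected range was "
        f"{low:,.2f} to {high:,.2f}."
    )
    return Alert(field, value, low, high, severity, explanation)


alert = build_alert("invoice_amount", 12_500.0, mean=1_250.0, std=50.0)
print(alert.severity, "-", alert.explanation)
```

The reviewer sees the value, the expected range, and the size of the deviation in one message instead of a bare flag.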

AI Assistant Interface

A conversational layer lets finance users interact with findings naturally — asking questions, drilling into anomalies, and requesting historical comparisons. The system responds with plain-language summaries of what it detected.

ERP Integration

The solution connects directly to SAP, Oracle, Microsoft Dynamics, or custom ERP systems via native interfaces — no middleware or manual exports required. Anomaly detection runs against live operational data in real time.

Scrum Methodology

Project Journey

The project followed a Scrum cadence with short, focused sprints. Early iterations prioritized the detection algorithm itself.

Discovery started with a deep dive into the client’s ERP workflows: which modules generated the most errors, where manual reviews were concentrated, and what patterns the finance team already recognized but couldn’t systematize. That analysis shaped the detection model’s feature set and the UX priorities for the dashboard.

How the AI Anomaly Detection Assistant Works

- ERP data flows in from financial and operational modules through native connectors.

- An AI model builds a behavioral baseline from historical transaction patterns.

- The system identifies deviations, suspicious patterns, and outlier values in real time.

- Each finding is classified by severity and assigned an explainability score.

- AI-generated explanations are pushed to users via the assistant interface.

- Finance team reviews flagged entries, drills down, and resolves — all in one session.

Scrum Process Flow

AI products evolve through testing and iteration, not a single big launch. Sprint-based delivery meant the client reviewed working features every 1–2 weeks and could redirect priorities before changes became costly.

How We Deliver the AI Anomaly Project

- We map ERP workflows, identify where anomalies cluster, and define the detection scope — modules, data types, and integration points.

- The team builds the unsupervised ML model against your historical data, tuning anomaly coefficients until detection accuracy meets production standards.

- Dashboard wireframes and assistant flows are tested against real anomaly scenarios — before a single line of production code is written.

- Engineering runs in focused 1–2 week cycles. Each sprint delivers working functionality you can review, test, and redirect if needed.

- Detection output is stress-tested against edge cases, false-positive rates are measured, and integration performance is validated across all connected modules.

- The system goes live with post-deployment monitoring. We refine the model as real data flows in and stay on for updates your team needs.
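The false-positive measurement mentioned in the testing step can be sketched as follows. This version assumes reviewers mark each raised alert as genuine or not, and it measures the share of alerts that turned out to be noise, which is the figure that drives alert fatigue. The review outcomes are made up for illustration and are not project results.

```python
def false_alert_share(reviewed):
    """Share of raised alerts that reviewers marked as not a real issue.

    `reviewed` is a list of booleans: True means the alert was genuine.
    """
    if not reviewed:
        raise ValueError("no reviewed alerts to measure")
    return sum(1 for genuine in reviewed if not genuine) / len(reviewed)


def relative_reduction(before, after):
    """Fractional drop from `before` to `after` (0.5 means 50% lower)."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before


# Made-up review outcomes for two model versions, for illustration only.
baseline_run = [True, False, False, False, True, False, False, True, False, False]
tuned_run = [True, True, False, True, True, False, True, True, True, True]

before = false_alert_share(baseline_run)  # 7 of 10 alerts were noise
after = false_alert_share(tuned_run)      # 2 of 10 alerts were noise
print(f"before={before:.0%} after={after:.0%} "
      f"reduction={relative_reduction(before, after):.0%}")
```

Tracking this number sprint over sprint shows whether a tuning change actually reduces noise or only moves it around.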

Timeline

Five phases, clearly defined

Discovery & Product Workshop

- Aligning on use cases and project goals

- Mapping ERP workflow pain points and error hotspots

- Defining anomaly taxonomy and integration architecture

UX Prototyping

- Dashboard wireframes tested against realistic anomaly scenarios

- Conversational assistant flow validated before model training

- Severity scoring UI and drill-down patterns defined

Agile Development (Sprints)

- Engineering runs in short, focused sprint cycles

- ML layer tested against real-world ERP data samples

- Edge cases surfaced early via sprint demos

QA & Testing

- Model adjustments based on observed real-data behavior

Launch & Support

- Post-launch monitoring for production edge cases

Results

Before

- ✕ Finance teams relied on manual spot-checks and static rule-based validation.

- ✕ Data entry errors regularly went unnoticed until the quarterly reconciliation cycle, creating expensive rework and audit risk.

- ✕ Hardcoded thresholds generated excessive false positives, leading to alert fatigue and missed genuine anomalies.

- ✕ Multi-table relationships were effectively invisible. Anomalies spanning several ERP modules went completely undetected.

- ✕ No centralized view of data quality across modules. Each team reviewed its own silo.

After

- ✔ Multi-table anomaly detection across 5+ ERP modules simultaneously.

- ✔ Financial risks and exposure have been reduced significantly. The system flags suspicious patterns before they compound.

- ✔ False-positive rate reduced by ~60–70% thanks to adaptive ML scoring.

- ✔ Cross-table anomalies are now detected automatically, because the model was designed to understand relational data from the start.

- ✔ Compliance and audit readiness improved through consistent, documented anomaly review processes.
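To show what a cross-table check can look like in practice, the sketch below links invoices to purchase orders and flags records whose relationship looks unusual, either because the link is missing or because the invoice-to-order ratio is far from the typical ratio. The table contents, field names, and threshold are hypothetical, and the robust ratio test is a stand-in rather than the relational model described above.

```python
from statistics import median

# Hypothetical rows from two ERP tables, linked by purchase order number.
purchase_orders = {"PO-1": 5_000.0, "PO-2": 12_000.0, "PO-3": 800.0, "PO-4": 3_200.0}
invoices = [
    {"invoice": "INV-10", "po": "PO-1", "amount": 5_050.0},
    {"invoice": "INV-11", "po": "PO-2", "amount": 11_900.0},
    {"invoice": "INV-12", "po": "PO-3", "amount": 8_000.0},  # ten times the order
    {"invoice": "INV-13", "po": "PO-4", "amount": 3_200.0},
    {"invoice": "INV-14", "po": "PO-9", "amount": 450.0},    # no matching order
]


def cross_table_findings(invoices, purchase_orders, threshold=3.5):
    """Return (invoice id, reason) pairs for suspicious invoice/order links."""
    findings = []
    ratios = {}
    for row in invoices:
        po_amount = purchase_orders.get(row["po"])
        if po_amount is None:
            findings.append((row["invoice"], "no matching purchase order"))
        else:
            ratios[row["invoice"]] = row["amount"] / po_amount
    if not ratios:
        return findings
    # Robust comparison of each ratio with the typical ratio (median and MAD).
    med = median(ratios.values())
    mad = median(abs(r - med) for r in ratios.values())
    for invoice, ratio in ratios.items():
        if mad and 0.6745 * abs(ratio - med) / mad > threshold:
            findings.append(
                (invoice, f"invoice/order ratio {ratio:.2f} vs typical {med:.2f}")
            )
    return findings


for invoice, reason in cross_table_findings(invoices, purchase_orders):
    print(invoice, "-", reason)
```

Neither problem is visible when each table is reviewed on its own; both only appear once the two tables are joined.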

The Impact

Verified Reviews

Our Reputation on Top Platforms

LITSLINK is consistently rated among the top AI and software development companies on Clutch, GoodFirms, and other industry review platforms. Client reviews highlight the team’s technical depth in machine learning and data engineering, clear communication throughout the engagement, and the ability to deliver production-grade AI solutions on schedule.

Have an AI Project in Mind?

If you need something similar, talk to us about building an AI anomaly detection solution that works inside your ERP. Share your project details and our team will respond within 48 hours.