Key Takeaways:

- The U.S. VR/AR healthcare market is growing rapidly and could reach $46.40 billion by 2034.

- Today, hospitals use these technologies to train the next generation of clinicians, plan difficult operations, relieve patients’ pain and stress, and treat mental disorders.

- The main drawback is the cost of implementation in hospitals. However, regular use over three years may bring these costs down.

- VR has already become a useful therapeutic tool, and businesses need to understand that this technology marks the future.

- These technologies must follow HIPAA rules to protect users’ sensitive data.

The days when going under the knife meant relying on human skill alone are fading. Virtual reality in healthcare is a real game-changer that will reshape how people see future medical procedures. A recent report from the Information Technology & Innovation Foundation suggests that the U.S. market for VR/AR in medicine will reach $46.40 billion by 2034.

The ability to use this technology for planning interventions, helping patients cope with painful procedures, and training professionals is a huge win for healthcare education. Using it to help ordinary people understand procedures and treatment plans adds another layer of confidence in this field. Computer-generated treatment is changing lives across the globe, and now it is saving lives as well. Let’s check out how VR applications in therapy are making a positive impact on patients and treatment options.

VR or AR: What is the Difference?

Most people associate virtual and augmented reality with the gaming industry, and that association is fair. However, VR and AR are also widely used in medicine, where they provide significant help for both clinicians and their patients. AR overlays digital elements on the real environment in real time, so users stay in their own surroundings while seeing computer-generated information.

VR technology in the healthcare industry goes a step further, transporting users into a fully computer-generated environment where they can learn, practice, or share new experiences without leaving their physical space. There is also extended reality (XR), which combines AR and VR solutions to create new ways of treating diseases. In terms of innovation, VR offers more opportunities for care providers and patients. It is widely used in the global market and has already shown solid results. With the definitions settled, it’s time to find out how virtual reality is used in medicine.

What is Virtual Reality in Medicine?

The emergence of VR in healthcare can lessen the need for human trials. Medical professionals, researchers, and equipment manufacturers can recreate real-life situations in a computer-generated environment without the need for an actual human body. Here are a few areas where virtual solutions and medical innovations are making real changes for both clinicians and ordinary people.

VR for Specialists’ Education

Medical schools have gained a chance to give students not only the necessary knowledge but also the practice that young minds crave. Providing training in such an environment saves time, money, and space. Before students begin working with people, VR gives them an opportunity for a more immersive learning experience. By pairing VR headsets with software applications, students can get hands-on practice before they actually put their hands on a real person. According to research conducted by Accenture, which trained first-line clinical workers and trainees using VR, 70% of the users passed the test by performing the correct sequence of steps.

VR allows students and physicians to take a journey into the body, view neurological activity from within the brain, and practice complicated procedures. They can acquire firsthand experience and learn how the human body works. So, medical VR training is not science fiction but an ordinary thing these days. For example, students and surgeons at Lucile Packard Children’s Hospital Stanford are using a program called Surgical Theater. This digital product lets them look at a computer-generated model of the patient’s body and plan an operation beforehand. It helps surgeons prepare for epilepsy surgery, brain tumor surgery, complex skull base surgery, and similar procedures. Another use of computer-generated content in medical school is the combination of animation with therapeutic content.

Students can pair software, like Human Anatomy VR, with Oculus Touch controllers. Using these interactive controllers, headsets, and software programs, they can interact with each of the bones, muscles, ligaments, and tendons that make up the human body. Information is displayed while users manipulate each part of the anatomy. Another program for studying the human body is Dissection Master XR. It offers a better understanding of human physiology and anatomy through high-resolution visuals that can be shown in 2D or 3D. Programs like these are a new kind of training that may change the future of education for the better.

VR for Patients’ Education

In the same way that doctors and surgeons use applications to prepare for surgery, patients are being given an opportunity to see a procedure before it happens.

The same Surgical Theater program used at Lucile Packard Children’s Hospital Stanford helps patients, too, because it lets them understand their condition and follow the steps a surgeon is going to take. It provides people not only with knowledge but also with a little relief. With medical VR headsets, patients can spend a few minutes understanding their diagnosis as well as their treatment plan.

VR for Alternative Therapy

Now we understand how virtual reality became an innovation in medicine, but we are still discovering its full potential. Healthcare innovation examples include a set of medical VR games that are meant to help in the fields of mental disorders, physical rehabilitation, pain management, and other therapies. Here are some recent ideas that have already changed people’s lives.

Pain Management

SnowWorld is a virtual world created for people who suffer from severe burn injuries. In the game, users throw snowballs at penguins or snowmen inside a computer-generated icy tundra. A study showed that with this immersive solution, burn patients’ pain levels dropped by 35%-50%. Another study, the Virtual Reality Pain Distraction Experience, involved a focus group of 50 users who wore a VR headset for a 15-minute experience called Pain RelieVR.

Gameplay focused on hitting objects with balls while wandering around a relaxing fantasy world. There was no violence. There was no competition. There was also no need to physically move while wearing the headset. There were, however, motivational music, positive animations, and direct messages to keep a person engaged. All it took to play the game was a simple turn of the head. This study gave a clear indication that VR applications in healthcare have the potential to help patients deal with pain and anxiety without the use of pain medication. Another similar study focused on VR’s ability to distract women from pain during childbirth without an epidural.

Mental Disorders

Developers from Oxford created GameChangeVR, a simulated experience that helps people cope with psychosis and social isolation. The program transports users to ordinary places, like a bus or a shop, and helps them face everyday situations and manage anxiety and stress.

Physical Rehabilitation

MindMaze is a program designed to help people who have suffered strokes regain the ability to move their upper or lower body. Doctors can adjust the program to suit individual patients.

Computer-Generated Treatment and HIPAA Compliance

HIPAA compliance is mandatory for all medical facilities. To deploy VR solutions, hospitals must implement the following data protection measures:

- Security & Encryption

Every VR system requires two-factor authentication. Access must be restricted to authorized clinicians and tech support. All patient data must reside in encrypted storage to prevent unauthorized access.

- Patient Privacy

Clinical staff must ensure that personal information remains hidden within the digital ecosystem during training or treatment sessions.

- Regular Audits

Facilities must conduct frequent security reviews to identify vulnerabilities. These audits should specifically verify that all system updates align with HIPAA protocols.
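To make the measures above more concrete, here is a minimal Python sketch of how a VR platform might gate session access and keep a trail for later audits. It is an illustration under stated assumptions, not a HIPAA-certified implementation: the role names, the `User` and `AuditLog` classes, and the `can_open_session` function are all hypothetical, and encryption of stored data is out of scope for this sketch.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical role names, based on the text's "authorized clinicians and tech support".
AUTHORIZED_ROLES = {"clinician", "tech_support"}


@dataclass
class User:
    user_id: str
    role: str
    passed_second_factor: bool  # True once the second authentication step succeeded


@dataclass
class AuditLog:
    """In-memory record of access attempts that a periodic audit could review."""
    entries: list = field(default_factory=list)

    def record(self, user_id: str, action: str, allowed: bool) -> None:
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user_id": user_id,
            "action": action,
            "allowed": allowed,
        })


def can_open_session(user: User, log: AuditLog) -> bool:
    """Allow access only to an authorized role that has passed two-factor authentication."""
    allowed = user.role in AUTHORIZED_ROLES and user.passed_second_factor
    # Every attempt is logged, whether it was allowed or denied.
    log.record(user.user_id, "open_vr_session", allowed)
    return allowed


log = AuditLog()
print(can_open_session(User("dr-01", "clinician", True), log))   # True
print(can_open_session(User("guest-7", "visitor", True), log))   # False
print(len(log.entries))                                          # 2
```

Logging denied attempts as well as granted ones is a deliberate choice here: a security review usually needs both to spot suspicious access patterns.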

Advantages/Disadvantages of Virtual Reality in Medicine

Virtual medical procedures look like a bright future for those closely tied to the healthcare market. Despite all the pros, there are also cons to consider.

High costs are the primary barrier.

VR hardware and specialized medical software require significant upfront investment. However, financial data justifies the expense: a 2018 PMC study showed that VR treatments saved hospitals an average of $5.39 per patient, while targeted technology groups helped save up to $98.49 per patient.
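As a rough illustration of what these savings mean for a budget, the short Python sketch below estimates how many patients it would take for per-patient savings to cover an upfront investment. The two savings figures come from the paragraph above. The `patients_to_break_even` function and the $12,000 upfront cost are hypothetical and used only for the example.

```python
import math

# Per-patient savings quoted in the text (USD).
SAVING_ALL_PATIENTS = 5.39
SAVING_TARGETED_GROUPS = 98.49


def patients_to_break_even(upfront_cost: float, saving_per_patient: float) -> int:
    """Smallest number of patients whose combined savings cover the upfront cost."""
    if upfront_cost < 0 or saving_per_patient <= 0:
        raise ValueError("upfront_cost must be >= 0 and saving_per_patient must be > 0")
    return math.ceil(upfront_cost / saving_per_patient)


UPFRONT_COST = 12_000  # hypothetical investment in USD, not a figure from the study

print(patients_to_break_even(UPFRONT_COST, SAVING_ALL_PATIENTS))     # 2227
print(patients_to_break_even(UPFRONT_COST, SAVING_TARGETED_GROUPS))  # 122
```

Under these assumptions, offering VR to the patient groups that benefit most shortens the payback period considerably.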

Implementation requires strategic planning:

- Hardware Selection

Choose equipment based on internal interviews with staff and patients to identify specific clinical needs.

- Staff Training

Clinicians must master the systems to create personalized treatment protocols using available software.

- Developer Collaboration

Technical specifications should be finalized only after defining the medical goals.

Other implementation challenges include:

- Equipment Selection

Consult physicians and patients first to identify specific clinical needs. Only after defining these goals should you contact developers to select a hardware system.

- Staff Adoption

Medical personnel require specialized training to master the equipment and develop personalized treatment protocols.

- Technical Expertise

Despite the industry’s growth, finding developers capable of building high-performance, medically compliant VR software in healthcare remains a challenge.

As you can see, there are also issues that may make clinicians change their minds about medical VR technology and turn back to traditional forms of treatment. So, let’s look at this question by comparing the pros and cons of virtual reality in medicine and surgery.

Benefits of virtual reality in medicine

| Benefit | What it gives |
|---|---|
| Risk-Free Clinical Training | Surgeons can practice complex, life-critical maneuvers in a digital sandbox without any physical threat to actual patients. |
| Immersive Rehabilitation | Gamified environments boost patient engagement, significantly accelerating recovery of both motor and cognitive functions. |
| Non-Pharmacological Pain Relief | Technology acts as a powerful “digital analgesic” by redirecting the brain’s focus away from pain signals during painful procedures. |
| High-Precision Diagnostics | Health experts can interact with detailed 3D reconstructions of a patient’s anatomy, enabling more accurate pre-operative planning. |

Disadvantages of virtual reality in medicine

| Disadvantage | Risk it poses |
|---|---|
| Substantial Initial Investment | Implementing high-end systems and custom software development requires significant upfront capital from healthcare facilities. |
| Cybersickness Symptoms | Users may experience nausea, dizziness, or spatial disorientation due to a sensory mismatch between their eyes and inner ear. |
| Specialized Content Gap | There is still a noticeable shortage of high-quality, evidence-based therapeutic simulations tailored to specific clinical protocols. |
| Data Privacy & Security | Storing sensitive patient data within cloud-based platforms introduces new vulnerabilities regarding HIPAA compliance and data leaks. |

Cost of Virtual Reality in Medicine

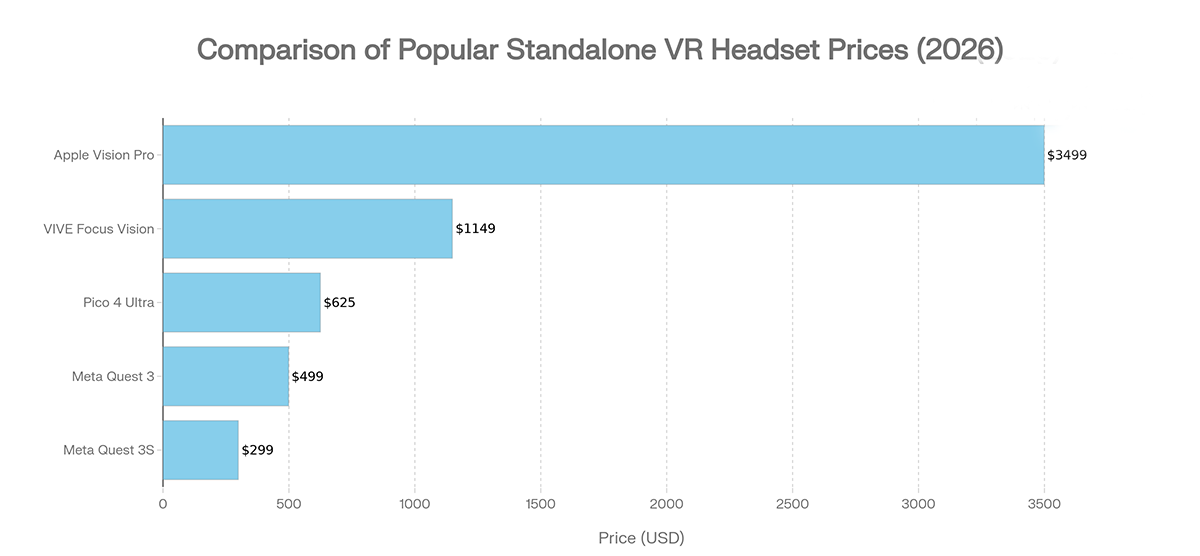

Since we have already mentioned prices, it’s fair to talk about the cost of virtual reality applications in healthcare. The first thing to find out is how much the systems cost. An average price for one medical VR headset might be $1,200, depending on its characteristics. In 2026, the most popular sets are priced as follows:

A live drill costs $229.79 per medical student ($18,617.54 total), whereas a virtual experience costs $327.78 per student ($106,951.14 total). However, regular VR integration scales efficiently, eventually dropping the price to $115.43 per participant.

What about program development? Here, you can find different prices depending on your needs.

| App Type | Feature Description | Estimated Cost |
|---|---|---|
| Basic App | Simple functionality, standard UI/UX | $10,000 – $30,000 |
| Interactive App | Object interaction and custom animations | $30,000 – $80,000 |
| Full Immersion | Complete user immersion in a computer-generated environment | $80,000 – $200,000 |
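To see how these figures combine, the following Python sketch adds the headset price to the app development ranges to get a rough upfront budget, then spreads that budget across participants. It uses the $1,200 average headset price and the cost ranges quoted above. The function names, the headset count, and the participant count are hypothetical, and ongoing costs such as maintenance and staff training are left out.

```python
# App development ranges from the table above (USD, low and high estimates).
APP_COST_RANGES = {
    "basic": (10_000, 30_000),
    "interactive": (30_000, 80_000),
    "full_immersion": (80_000, 200_000),
}

HEADSET_PRICE = 1_200  # average headset price quoted in the text (USD)


def upfront_budget(app_type: str, headsets: int) -> tuple[int, int]:
    """Return the (low, high) upfront estimate: app development plus hardware."""
    low, high = APP_COST_RANGES[app_type]
    hardware = headsets * HEADSET_PRICE
    return low + hardware, high + hardware


def cost_per_participant(upfront: float, participants: int) -> float:
    """Spread an upfront cost evenly across everyone who uses the system."""
    if participants <= 0:
        raise ValueError("participants must be positive")
    return upfront / participants


# Hypothetical scenario: an interactive app with 10 headsets and 300 trainees.
low, high = upfront_budget("interactive", headsets=10)
print(low, high)                       # 42000 92000
print(cost_per_participant(low, 300))  # 140.0
```

The same arithmetic explains why regular use lowers the per-participant price: the upfront cost stays fixed while the number of participants keeps growing.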

LITSLINK. We Implement VR in the Medical Industry

We are a company focused on finding the most effective tech solutions for businesses. Our experience extends beyond the medical field to e-commerce, fintech, learning, real estate, and more. We design solutions using VR, AR, AI, blockchain, machine learning, cloud storage, and other technologies. With over 300 people on the team, we can offer the most creative solutions for your business and provide ongoing maintenance for them.

Modern Medicine is Getting Virtual

Modern medicine is evolving through immersive technology. Virtual reality in healthcare creates endless opportunities for clinical innovation. If your facility requires advanced surgical training, non-pharmaceutical pain management, or anxiety relief for patients, it is time to develop a custom medical VR program. You provide the vision; we provide the technical execution. Ready to create something that could drastically change the lives of others? Let us help. We can take an idea or a design and make it a reality. Let our VR team help you create a brand-new immersive experience for your users.

FAQ

Q: How is VR being used in health protection?

A: Medical virtual reality is becoming common practice. It helps students study anatomy and attend simulated operations. Doctors use the technology to plan future operations. For patients, it’s a good way to relieve anxiety or stress. The technology also helps in rehabilitation and in the treatment of mental health conditions.

Q: Is VR covered by Medicare?

A: Yes, coverage is available for specific medical situations and uses, such as VR equipment designed for home-based cognitive behavioral therapy (CBT).

Q: What do doctors say about VR?

A: Medical professionals generally consider VR safe for moderate use. However, they warn that prolonged sessions may cause dizziness or eye strain.

Q: Is VR therapy FDA-approved?

A: Yes. The FDA has cleared several systems, including RelieVRx, as Class II medical devices for chronic pain management.

Q: What are the three types of immersive technology?

A: Augmented Reality (AR): Overlays digital data or objects onto the real world. Virtual Reality (VR): Transports users into a fully computer-generated environment. Extended Reality (XR): An umbrella term merging VR and AR into a unified experience.

Q: Do doctors use VR to practice surgery?

A: Yes. Surgeons use VR to simulate complex operations and plan procedures. It has become a standard tool for enhancing clinical skills and reducing surgical risks.