“Doing no harm, both intentional and unintentional, is the fundamental principle of ethical AI systems.”

Amit Ray, author

The artificial intelligence revolution is upon us. Like a double-edged sword, AI offers immense potential for growth: automating tasks, optimizing performance, and unearthing hidden opportunities. In a recent McKinsey survey, 40% of respondents said their organizations expect to increase their overall investment in AI because of advances in generative AI (Gen AI).

However, the ethical pitfalls of AI systems loom large. Biases, privacy breaches, and reputational damage can cripple a company’s success. Today’s consumers are no longer passive bystanders. They actively seek out brands that prioritize transparency and ethical practices. Ignoring these concerns can lead to a devastating loss of trust and customer loyalty.

So, the crucial question remains: How can we leverage the power of AI responsibly, ensuring its successful and ethical integration within the business landscape?

This comprehensive guide dives deep into the core principles of responsible AI. We’ll navigate the path toward ethical AI implementation, empowering you to build trust with your customers and unlock the true potential of this transformative technology.

What is Responsible AI?

Most people have at least a rough idea of what AI is, but far fewer know what responsible AI means. Let’s take a closer look at the concept.

Responsible (ethical, trustworthy) AI is a set of principles and practices intended to govern the development, deployment, and use of artificial intelligence systems in line with ethics and the law. It helps ensure that the technology causes no harm to employees, businesses, or customers, allowing organizations to build trust and scale with confidence. Simply put, before companies use AI systems to improve their operations and drive business growth, they should establish predefined guidelines, ethical standards, and principles that govern the technology.

How is AI used responsibly in business? Companies rely on artificial intelligence for tasks such as automation, personalization, and data analysis while keeping these systems transparent and interpretable. Whenever a company applies this technology, it should explain to users whether and how their personal data will be processed. This is especially important in healthcare, where medical professionals use AI systems to help make diagnoses. They need documentation of how the system reached its output so that its conclusions can be checked.

Although the number of AI use cases in business is surging, responsible use lags behind. As a result, companies increasingly face financial and regulatory problems, as well as strained customer relationships and lower customer satisfaction. How critical are responsible AI principles in business software? We’ll find out in the next section.

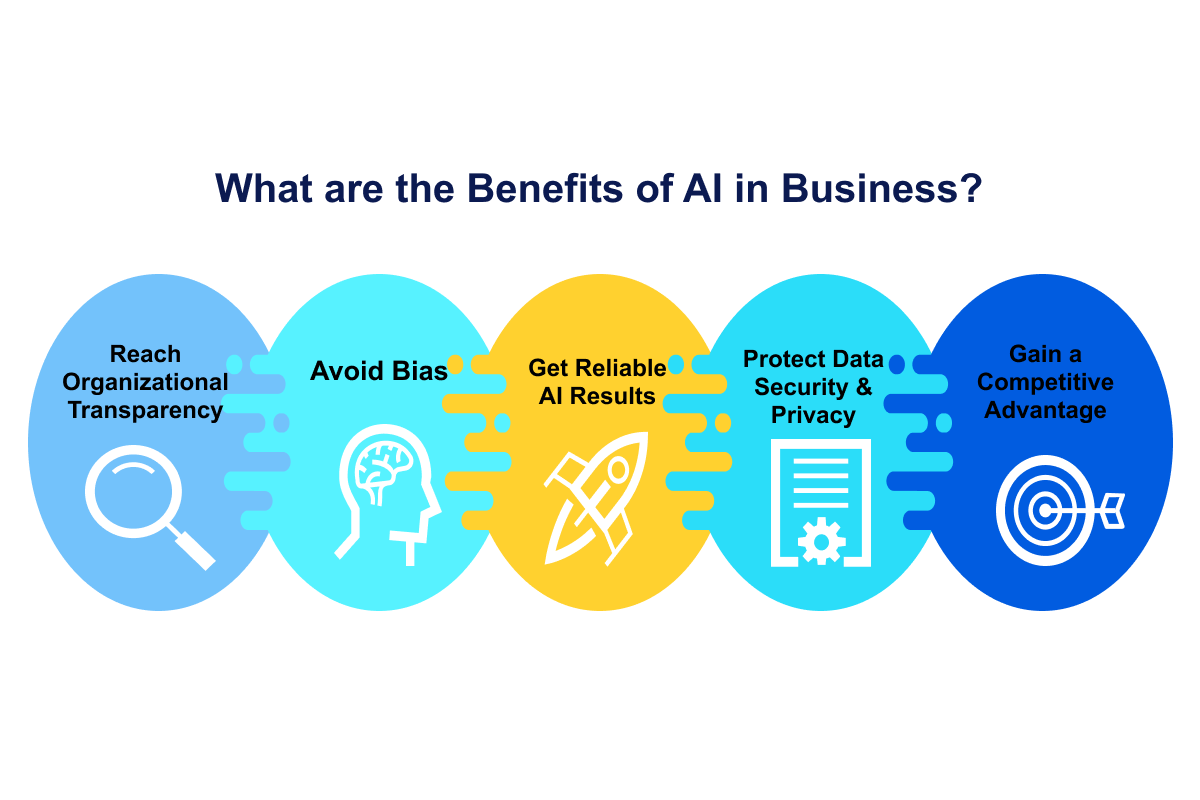

What are the Benefits of Responsible AI in Business?

When implementing AI technologies, organizations must manage them carefully to prevent damage that, even if unintended, can harm their brand reputation, their employees, and society in general.

An infamous example of the irresponsible use of artificial intelligence is Amazon’s experimental recruiting tool, which the company began building in 2014 to shift the burden of screening initial candidates onto technology. This went thoroughly wrong: the tool was trained on résumés submitted to Amazon over the previous ten years, most of which came from men, so it developed an unintended bias against women. It penalized résumés that included the word “women’s” and downgraded graduates of all-women’s colleges. It took Amazon about a year to realize that the system was not rating candidates in a gender-neutral way, and the project was eventually scrapped.

Let’s take a closer look at how beneficial the ethical use of artificial intelligence in business operations can be. By taking a responsible approach, companies gain benefits such as:

Avoid Bias

The Amazon case shows the damage a system trained on biased data can do. Responsible AI principles help businesses build trust and reduce the risk of algorithms producing unfair or discriminatory results. Examining how the system works and what decisions it makes helps identify biases and prevent them in the future.

Get Reliable AI Results

To better understand the business impact of AI, imagine applying this technology to your company’s processes, only to realize soon afterward that the results are not reliable. Artificial intelligence can process enormous amounts of data and generate predictive analytics from it. But before you accept these results as fact, you need to verify them: check the correctness of the system’s output first, and only then let it run on its own.
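One way to put “check first, then let it run on its own” into practice is a promotion gate: compare the model’s outputs with answers that people have reviewed, and allow unattended use only once accuracy clears a bar on a large enough sample. The following is a minimal sketch under stated assumptions: the helper names are hypothetical, the 95% threshold and 200-case minimum are illustrative rather than a standard, and plain accuracy may need to be swapped for a metric that suits your task.

```python
from typing import Hashable, Sequence


def accuracy(predictions: Sequence[Hashable], reviewed: Sequence[Hashable]) -> float:
    """Share of AI outputs that match the human-reviewed answers."""
    if len(predictions) != len(reviewed) or not reviewed:
        raise ValueError("need equally sized, non-empty prediction and review lists")
    return sum(p == r for p, r in zip(predictions, reviewed)) / len(reviewed)


def ready_for_autonomy(
    predictions: Sequence[Hashable],
    reviewed: Sequence[Hashable],
    min_accuracy: float = 0.95,  # illustrative threshold (assumption)
    min_samples: int = 200,      # illustrative minimum sample size (assumption)
) -> bool:
    """True only if the model was checked on enough cases and was right often enough."""
    if len(reviewed) < min_samples:
        return False  # too little evidence to trust the system on its own yet
    return accuracy(predictions, reviewed) >= min_accuracy


# Example: document categories predicted by a model vs. a reviewer's labels (made up).
reviewed = ["invoice"] * 150 + ["contract"] * 50
predicted = ["invoice"] * 150 + ["contract"] * 45 + ["invoice"] * 5
print(accuracy(predicted, reviewed))            # 0.975
print(ready_for_autonomy(predicted, reviewed))  # True
```

Requiring a minimum sample size alongside the accuracy bar keeps a handful of lucky early results from being mistaken for proven reliability.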

Achieve Organizational Transparency

Share clear information about the purpose, risks, and expected outcomes of using artificial intelligence in your business operations. This improves your brand image among the public and stakeholders. Responsible use of AI systems also means that your employees know how to use data and derive insights from it.

Protect Data Security & Privacy

Companies using artificial intelligence should place a high value on customer data security and protection. Under responsible AI principles, organizations do not collect users’ personal information or images without their consent. Their practices should comply not only with ethical standards but also with data protection regulations such as the GDPR (General Data Protection Regulation) to prevent misuse.

Gain a Competitive Advantage

Responsible AI helps organizations become more competitive in the market. Because it lets you collect and analyze business data securely, you can base your decisions on reliable results. Moreover, AI systems are a great tool for automating everyday tasks (answering customer queries, categorizing documents, etc.) and optimizing workloads, improving the entire company’s performance.

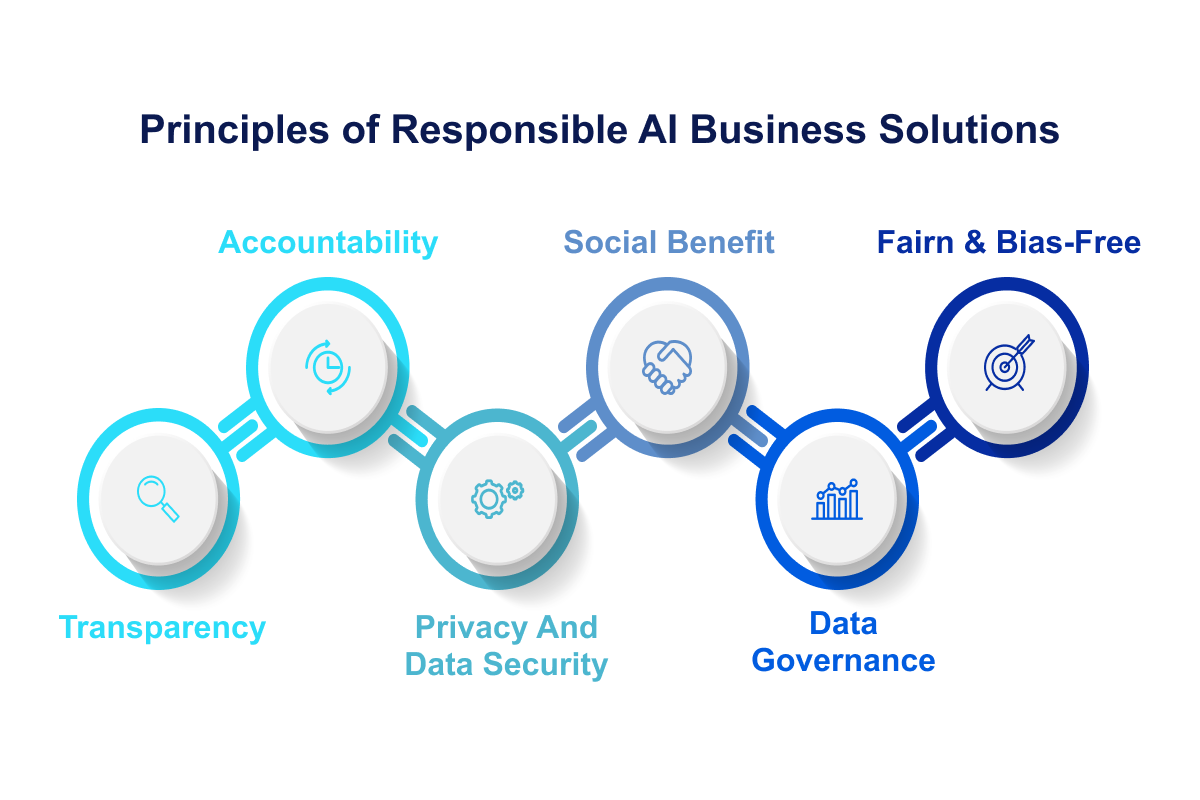

Principles of Responsible AI Business Solutions

If you plan to develop AI solutions for your business responsibly, you need to identify and minimize the risks the technology brings. Making your models transparent, safe, and secure will help you automate numerous tasks and scale your company. Below, we’ll go over the key principles of responsible AI in business.

Privacy and Data Security

Because AI systems need access to vast amounts of data to recognize patterns, there’s a high risk of exposing people’s personal information. How can you secure the data used to train and run AI models in your business?

To protect sensitive information, organizations must take additional data processing measures, including anonymization and de-identification, to ensure their systems do not violate regulations such as HIPAA (Health Insurance Portability and Accountability Act) and the GDPR. To safeguard privacy and security when using AI systems in your organization, you should also do the following:

- Implement access control mechanisms;

- Create a data management strategy;

- Train employees to work with AI systems.
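De-identification is the easiest of these measures to show in code. Below is a minimal sketch of one common approach, keyed-hash pseudonymization: direct identifiers are dropped and replaced by an HMAC-SHA256 of the normalized email address, so records belonging to the same person can still be linked without storing who that person is. The field names and the `pseudonymize` helper are hypothetical. Keep in mind that under the GDPR, pseudonymized data still counts as personal data, because whoever holds the key can re-link it; full anonymization also requires removing or generalizing quasi-identifiers such as birth dates and ZIP codes.

```python
import hashlib
import hmac

# Hypothetical direct identifiers; adjust to your own data schema.
DIRECT_IDENTIFIERS = {"name", "email", "phone"}


def pseudonymize(record: dict, secret_key: bytes, id_field: str = "email") -> dict:
    """Return a copy of `record` without direct identifiers, plus a stable pseudonym.

    The same identifier and key always yield the same pseudonym, so records can
    still be joined. Unlike a plain unsalted hash, the pseudonym cannot be
    reproduced from a guessed email address without the secret key.
    """
    # Normalize so " Jane@Example.com" and "jane@example.com" map to one pseudonym.
    raw_id = str(record[id_field]).strip().lower().encode("utf-8")
    pseudonym = hmac.new(secret_key, raw_id, hashlib.sha256).hexdigest()
    cleaned = {k: v for k, v in record.items() if k not in DIRECT_IDENTIFIERS}
    cleaned["subject_id"] = pseudonym
    return cleaned


# Example with a made-up record. In production, load the key from a secrets manager.
key = b"example-key-do-not-use-in-production"
patient = {"name": "Jane Doe", "email": "jane@example.com", "age": 54, "diagnosis_code": "E11"}
print(pseudonymize(patient, key))
```

The printed record keeps only `age`, `diagnosis_code`, and a 64-character `subject_id`. Pairing this with the access control measure above, so that only a small group can read the key, is what keeps re-identification under control.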

Transparency

When adopting AI systems in business, you need to apply testing procedures and practices. Many AI systems, particularly complex ones, cannot explain how or why they reached a given result. This lack of transparency and interpretability can undermine trust in AI tools.

Understanding how sophisticated neural networks work is a tricky task, even for experts. However, there are several practices you can adopt to make your AI algorithms easier to explain:

- Clearly state what kind of data your AI systems use, what their purposes are, and what factors influence the final results;

- Document the behavior of your system;

- Explain to end users how the software works;

- Decide what kind of explanation the system needs to provide;

- Clarify how the system’s errors will be identified and fixed.
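To state “what factors influence the final results,” you need some way of measuring that influence. One widely used, model-agnostic technique (our choice here, not something the practices above prescribe) is permutation importance: shuffle one input feature at a time and see how much the model’s accuracy drops. The following minimal sketch assumes a model that maps a list of numeric features to a 0/1 decision; the toy model and data are hypothetical.

```python
import random
from typing import Callable, List, Sequence

Row = List[float]


def accuracy(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    """Share of predictions that match the true labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def permutation_importance(
    predict: Callable[[Row], int],
    X: Sequence[Row],
    y: Sequence[int],
    n_repeats: int = 5,
    seed: int = 0,
) -> List[float]:
    """Average drop in accuracy when each feature's column is randomly shuffled.

    A large drop means the model relies heavily on that feature; a drop near
    zero means the feature barely affects the results.
    """
    rng = random.Random(seed)
    baseline = accuracy(y, [predict(row) for row in X])
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)
            # Rebuild every row with feature j replaced by its shuffled value.
            shuffled = [list(row[:j]) + [value] + list(row[j + 1:]) for row, value in zip(X, column)]
            drops.append(baseline - accuracy(y, [predict(row) for row in shuffled]))
        importances.append(sum(drops) / n_repeats)
    return importances


# Hypothetical toy model: decides using feature 0 only and ignores feature 1.
def toy_model(row: Row) -> int:
    return int(row[0] > 0.5)


X = [[0.1, 3.0], [0.9, 1.0], [0.2, 7.0], [0.8, 2.0], [0.3, 5.0], [0.7, 4.0], [0.4, 6.0], [0.6, 8.0]]
y = [toy_model(row) for row in X]
print(permutation_importance(toy_model, X, y))
```

Here feature 1 scores exactly 0.0 because the model never looks at it, while feature 0 gets a positive score. The same check can reveal whether a model leans on a sensitive attribute, or on a proxy for one, which feeds directly into documenting the system’s behavior.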

Accountability

If you’re using AI technology for business, be aware that it involves a whole supply chain: data providers, technology providers, and system vendors. How can you prevent things from going wrong?

First things first, maintain meaningful control over your systems. All parties involved in the development and deployment of AI solutions for business bear responsibility for their ethical consequences and any abuses. It’s vital to assign roles and responsibilities so that everyone is accountable for adhering to pre-established trustworthy AI principles. The more autonomy you give an AI system, the higher the level of organizational accountability should be, because the consequences for public safety or health can be severe.

Fairness & Bias-Free

We’ve already mentioned the importance of unbiased and fair AI systems; now let’s go into a little more detail. Simply put, the technology needs to treat different groups of people equally. Humans naturally tend to make prejudiced decisions, but we want machines to be smarter and fairer. The problem is that ML models learn from real-world data, which often reflects those same prejudices.

So, how can you use unbiased artificial intelligence for business? Here are several tips:

- Understand how the technology behaves;

- Define and measure how fair your model is;

- Research biases and why they occur in data;

- Repeatedly test the model on data from the different groups of people it affects.
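The tip about defining how fair your model is can be made measurable. For a yes/no decision such as Amazon’s résumé screening, a common starting point is to compare selection rates across groups. The following sketch applies the four-fifths rule of thumb from the US EEOC’s Uniform Guidelines on Employee Selection Procedures: a group whose selection rate falls below 80% of the highest group’s rate is flagged for possible adverse impact. It is a screening heuristic, not a complete fairness audit, and the function names, group labels, and outcomes are made up for illustration.

```python
from collections import defaultdict
from typing import Dict, Hashable, List, Sequence


def selection_rates(groups: Sequence[Hashable], decisions: Sequence[int]) -> Dict[Hashable, float]:
    """Share of positive decisions (e.g., 'advance to interview') per group."""
    totals: Dict[Hashable, int] = defaultdict(int)
    selected: Dict[Hashable, int] = defaultdict(int)
    for group, decision in zip(groups, decisions):
        totals[group] += 1
        selected[group] += int(bool(decision))
    return {group: selected[group] / totals[group] for group in totals}


def impact_ratios(groups: Sequence[Hashable], decisions: Sequence[int]) -> Dict[Hashable, float]:
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(groups, decisions)
    highest = max(rates.values())
    if highest == 0:
        # Nobody was selected at all, so there is no disparity to measure.
        return {group: 1.0 for group in rates}
    return {group: rate / highest for group, rate in rates.items()}


def flag_adverse_impact(
    groups: Sequence[Hashable], decisions: Sequence[int], threshold: float = 0.8
) -> List[Hashable]:
    """Groups whose impact ratio falls below the four-fifths (0.8) rule of thumb."""
    return [g for g, ratio in impact_ratios(groups, decisions).items() if ratio < threshold]


# Illustrative, made-up screening outcomes (1 = advanced, 0 = rejected).
groups = ["men"] * 10 + ["women"] * 10
decisions = [1] * 6 + [0] * 4 + [1] * 3 + [0] * 7
print(selection_rates(groups, decisions))      # {'men': 0.6, 'women': 0.3}
print(flag_adverse_impact(groups, decisions))  # ['women']
```

Running a check like this on every model release, and on live decisions afterward, turns “test the model repeatedly” into a concrete, repeatable step.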

Social Benefit

The primary goal of this principle is to ensure that the use of AI technology in business adds value to people by solving problems and improving their lives in general. Without adherence to ethical principles, companies could make decisions that they believe will benefit them the most, even if they harm the well-being of others.

For example, researchers should consider how their work might affect all members of society, including those who are unable to participate in decision-making processes due to lack of access or other issues related to social inequality.

Applied responsibly, AI can improve the world by analyzing past data and helping solve unexpected challenges. For example, some companies have used AI to help accelerate COVID-19 vaccine development.

Data Governance

This principle means that organizations collect and process information in line with people’s data protection rights, so that their use of AI does not backfire on their business strategy. The information must be collected lawfully (for example, with the person’s consent) and stored only as long as necessary for the original purpose (e.g., a clinical trial). In addition, the company must be clear about how long personal information will be stored and how it can be deleted from databases if necessary (e.g., if consent has been withdrawn).
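The retention and withdrawal rules described above can be turned into an automated check that feeds a scheduled clean-up job. The following is a minimal sketch that assumes each record carries its processing purpose, collection date, and an optional consent-withdrawal date. The purposes, retention periods, field names, and the policy of deleting records with no documented purpose are all hypothetical; real periods have to come from your legal and privacy teams.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Dict, List, Optional

# Hypothetical retention periods per processing purpose (assumption).
RETENTION_PERIODS: Dict[str, timedelta] = {
    "clinical_trial": timedelta(days=5 * 365),
    "marketing": timedelta(days=365),
}


@dataclass
class PersonalRecord:
    subject_id: str
    purpose: str
    collected_on: date
    consent_withdrawn_on: Optional[date] = None


def due_for_deletion(record: PersonalRecord, today: date) -> bool:
    """True if the record should be deleted under the policy above."""
    # Consent withdrawn: stop keeping the data for this purpose.
    if record.consent_withdrawn_on is not None and record.consent_withdrawn_on <= today:
        return True
    period = RETENTION_PERIODS.get(record.purpose)
    if period is None:
        # Assumed policy: data without a documented purpose has no reason to be kept.
        return True
    # The retention period for the original purpose has run out.
    return today - record.collected_on > period


def deletion_queue(records: List[PersonalRecord], today: date) -> List[str]:
    """IDs of records to delete, e.g., as input to a scheduled clean-up job."""
    return [r.subject_id for r in records if due_for_deletion(r, today)]


records = [
    PersonalRecord("A", "marketing", date(2023, 6, 1)),
    PersonalRecord("B", "marketing", date(2022, 1, 1)),
    PersonalRecord("C", "clinical_trial", date(2023, 1, 1), consent_withdrawn_on=date(2023, 12, 1)),
    PersonalRecord("D", "unknown", date(2023, 12, 1)),
]
print(deletion_queue(records, today=date(2024, 1, 1)))  # ['B', 'C', 'D']
```

Keeping the retention periods in one table also gives you the kind of record you need to show regulators how long each type of data is stored and why.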

The quality of the data is also important: it should be accurate and up to date. Companies should keep records of how they collect, store, and use information so they can demonstrate compliance with privacy laws. When using AI systems to process personal records, consider questions such as the following:

- Who has access to the data?

- What kind of data is collected and what can be done with it?

- Are people aware that they are being observed?

- How much transparency do people have about how their data is used and how accurate it is?

- What happens if something goes wrong?

Examples of Responsible Artificial Intelligence in Business

To follow AI trends responsibly, companies must establish clear principles for how they will use the technology. Unfortunately, many firms that deploy artificial intelligence do not yet handle it responsibly. By one estimate, only 12% of companies use AI in a mature way, which gives those brands a significant competitive advantage. Let’s take a look at some notable examples of responsible AI in business:

IBM

IBM is a good example of responsible AI at work in business. One of its best-known efforts in this area was the IBM Watson Health division, a strategic business unit that aimed to solve some of the biggest challenges in healthcare and life sciences. The division used artificial intelligence to help doctors make better decisions about patient treatment. In 2022, IBM sold the Watson Health data and analytics assets to Francisco Partners, which now operates them as Merative.

How did it work? IBM Watson Health used artificial intelligence to analyze data from sources such as electronic health records, clinical notes, and lab results. The program then provided insights that helped medical professionals make better-informed treatment decisions for their patients. In addition, this data helped doctors learn from the experiences of others and improve their practices over time.

H2O.ai

H2O.ai offers one answer to the question “How can a business implement AI and act responsibly?” It uses artificial intelligence to solve real-world problems, from detecting health risks in water systems to predicting which credit card customers are likely to churn. By applying responsible AI principles, the company aims to make it easier for businesses of different sizes and industries to build trust with customers and offer safe products.

The company’s cloud-based H2O AI Cloud platform can be used to create, train, and deploy machine learning models in line with GDPR requirements. It helps organizations analyze their data while handling it in compliance with privacy laws.

This responsible AI technology has already been adopted by more than 37% of enterprises around the world that want to use artificial intelligence without breaking laws or regulations.

Diveplane

Diveplane was founded by two Stanford Ph.D. students who were tired of seeing companies abuse artificial intelligence. The company is building a platform that allows all businesses to harness the power of the technology and use it to their advantage.

Diveplane’s technology enables companies to develop, train, and deploy their own customized machine learning models. It can be used in any industry, including retail, finance, travel, and more. The organization’s mission is to create an environment where ambitious entrepreneurs can thrive, as they believe this will lead to breakthroughs in AI innovation that benefit everyone in the world.

FAIRLY

FAIRLY helps organizations analyze artificial intelligence algorithms and develop more ethical, responsible, and compliant AI systems. To achieve this, it offers tools that help organizations understand how their AI systems work, identify potential biases and unfairness, and adjust their models accordingly. FAIRLY also provides a set of APIs for training, testing, and evaluating machine learning models for fairness in the cloud.

A recent report from the Center for Data Innovation found that only 20% of companies have policies in place for AI system development; the vast majority (80%) have none.

FAIRLY provides a set of best practices for building responsible AI systems, including:

- Trained data scientists must analyze and monitor new AI models before deployment;

- Test AI systems with real-world datasets to ensure they perform as expected;

- Remove biased data from training sets when discovered.

Fiddler

Fiddler aims to bring transparency to machine learning so that businesses can make more informed decisions about their data and its usage. The organization enables firms to build trustworthy AI business solutions by making it easy to understand how algorithms work, how to interpret them, and how to explain them to others.

By building transparency into the core of its platform, Fiddler helps enterprises gain the trust of their customers, employees, and partners. The company’s Explainable AI Platform makes it easy to gain insights from machine learning models and visually explore data and models to understand why they make certain decisions, gain confidence in them, and use the software more effectively in business.

Conclusion

AI, like most of our current technologies, should not be placed on a pedestal; it needs moral and ethical guidance. As we push computers to learn to solve ever more complex problems, our software must do so in a way that’s fair and compliant with the rules. Applying responsible AI principles in business is something all companies should strive for if they want to outperform their competitors.

If you want to avoid biased outcomes and ensure that your artificial intelligence systems are responsible, apply the above principles to keep your AI business software in check. Don’t get carried away with technologies that are beyond your control, and remember that your artificial intelligence system will not change the world; you will. If you’re looking for a team of experts to help you use artificial intelligence software ethically, contact us. We’ll bring our expertise and best practices to your project!