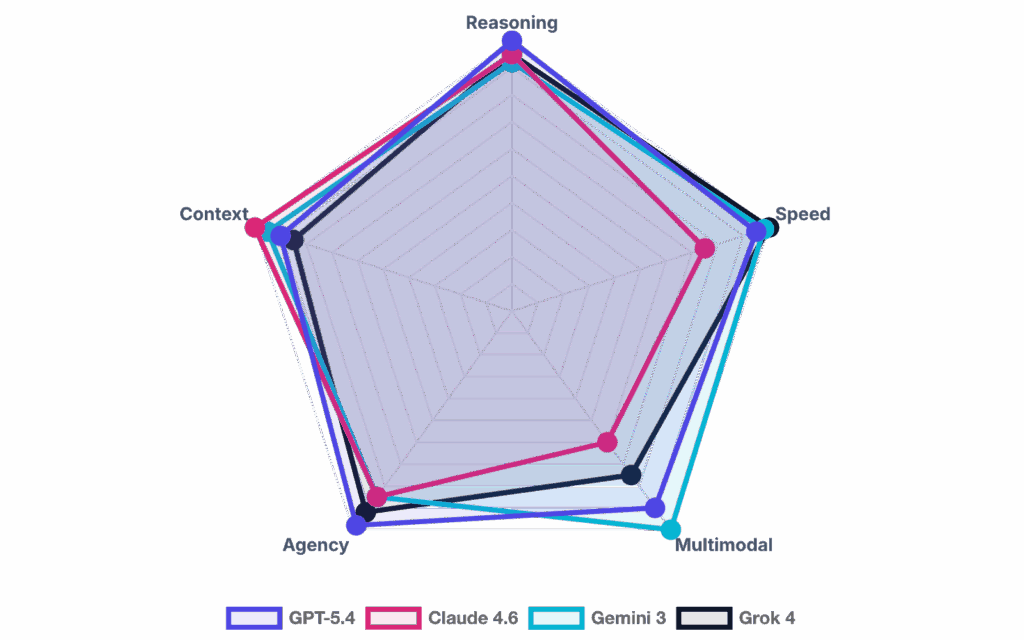

The AI market shifts faster than most product roadmaps. A tool that led benchmarks in Q1 is outpaced by Q3, and the noise makes it genuinely hard to know what’s worth your engineering hours.

This isn’t another roundup of flashy demos. We broke down the most advanced AI systems by what they actually do under pressure: writing and reviewing production code, automating workflows, scaling content operations, and supporting product decisions at speed.

Key Takeaways

- There’s no single “best” AI – only the right AI for your use case.

- Agentic AI is the defining shift of 2026 – and it changes how teams are structured.

- Speed and cost matter as much as raw intelligence.

- Enterprise AI adoption hinges on governance, not just capability.

- The biggest competitive advantage is shipping workflows, not running demos.

How Litslink Evaluates AI Systems in Practice

It started with a disagreement in a code review.

Our senior engineer had used GPT-5.3 to build an authentication module for a fintech client. Clean code, passed the linter, looked great. It also had a token expiry flaw that would’ve only surfaced under specific production load — the kind of bug that slips through every standard test. The engineer running Claude Opus 4.6 on the same task in parallel caught it. Not because Claude is the smartest AI right now in some abstract sense, but because Opus 4.6’s extended reasoning traced the full token lifecycle before writing a single line.

That gap doesn’t show up in any benchmark.

We’ve run this kind of head-to-head across four client projects since: People Counter, Goods Demand Forecasting, ShiftRx, and DevOps Services for IoT. Same task, different models, real outcomes measured. Here’s what we found:

| Project | Task | Models Tested | Winner | Why |
| --- | --- | --- | --- | --- |
| People Counter | Contract clause extraction from 200+ page docs | GPT-5.2 vs Claude Opus 4.6 vs Gemini 3 Pro | Claude Opus 4.6 | Lowest hallucination rate on legally sensitive content; 1M token context handled full contracts without chunking |
| Goods Demand Forecasting | Automated test generation from the existing codebase | GitHub Copilot Agent vs Devin | Copilot Agent (with Devin for cleanup) | Copilot faster on greenfield tests; Devin better at legacy refactor and coverage gap analysis |
| ShiftRx | High-volume personalized content at scale | GPT-5.2 Instant vs Gemini 3 Flash | Gemini 3 Flash | 37% lower cost per 1M tokens at comparable output quality; latency held up under concurrent requests |
| DevOps Services for IoT | IDE workflow, daily coding assistance | Cursor + Claude vs GitHub Copilot | Cursor + Claude | Plan Mode + persistent rules reduced context re-explanation across sessions |

Approaches to Measurement

Traditional techniques like the Turing Test, cognitive exercises, ML metrics (accuracy/F1), and human review still help clarify what we’re evaluating and why it matters, but they say little about speed, cost, or day-to-day usability in production.

That’s why we rely on the model scoreboard approach used by Artificial Analysis: their models page compares systems across quality/intelligence, price, output speed, latency, context window, and more. In other words, it’s not just “who is the smartest AI,” but “which of the top AI models is actually usable.”

Here’s the simple framework we use:

| What we measure | What it answers | Why it matters in real life |
| --- | --- | --- |
| Intelligence (composite) | “Which AI is the best at hard tasks?” | Avoids ranking by a single cherry-picked benchmark |
| Latency & speed | “How fast does it feel?” | UX + agent reliability depend on responsiveness |
| End-to-end time | “How long to get a usable answer?” | Captures input processing + “thinking” + generation |
| Price (blended) | “What does this cost per outcome?” | Determines if a “most powerful AI” is practical |
| Context window | “How much can it handle at once?” | Big docs, repos, and RAG workflows need room |

On the metrics side, Artificial Analysis defines Time to First Token (TTFT), Time to First Answer Token (after any thinking), and End-to-End Response Time (e.g., seconds to output 500 tokens), which together describe “how fast” a model actually feels. For cost, they often use a blended price assuming a 3:1 input-to-output token mix (handy for comparing chat, RAG, and content workloads fairly).
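To make the blended-price idea concrete, here is a minimal sketch of the arithmetic. The function names, the example prices, and the simplified end-to-end estimate (time to first token plus generation time at a constant output speed) are our own illustrative assumptions, not Artificial Analysis code or an official formula.

```python
def blended_price(input_price: float, output_price: float,
                  input_parts: float = 3.0, output_parts: float = 1.0) -> float:
    """Weighted average price per 1M tokens for a given input:output token mix."""
    total_parts = input_parts + output_parts
    return (input_price * input_parts + output_price * output_parts) / total_parts


def end_to_end_seconds(ttft_s: float, output_tokens: int, tokens_per_second: float) -> float:
    """Rough estimate: wait for the first token, then stream the rest at a steady rate."""
    return ttft_s + output_tokens / tokens_per_second


# Illustrative numbers only (USD per 1M tokens, seconds, tokens/second).
print(blended_price(3.00, 15.00))            # (3 * 3.00 + 1 * 15.00) / 4
print(end_to_end_seconds(1.0, 500, 100.0))   # 1 s to first token + 500 tokens at 100 tok/s
```

With a 3:1 mix, a model priced at $3 input and $15 output per 1M tokens blends to $6, which is why a low input price alone can be misleading for output-heavy workloads.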

AI systems evolve from year to year to overcome limitations and expand what the technology can do. With these measurement methods in mind, let’s look at the most capable AI systems available today.

14 Cutting-Edge Examples of AI Systems to Watch in Early 2026

Artificial intelligence and machine learning are transforming the way we live and work, and the possibilities for their use are virtually endless.

Here are the most powerful AI tools currently available to enterprises. This analysis explores the most sophisticated AI architectures across four primary functional fields and integrates real-world performance metrics with emerging industry trends.

Field 1: Text & Knowledge Work

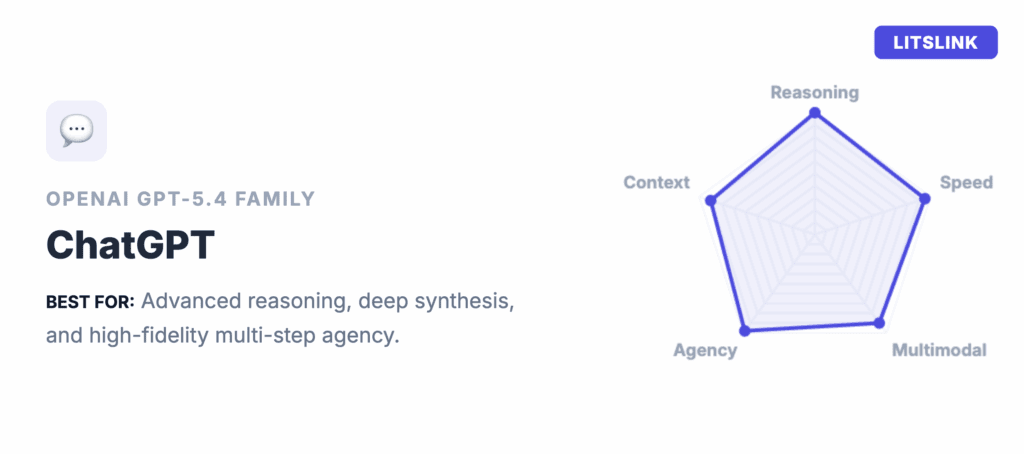

1) ChatGPT (OpenAI) – GPT-5.4 Family

What it is: A general-purpose AI assistant for writing, analysis, and research. GPT-5.4 includes specific variants like Thinking and Pro.

Best for:

- Structured writing like briefs, specs, emails, and proposals

- Research synthesis with citations and a deep learning process

- Long-document analysis and extraction

Why it’s advanced in early 2026: Released on March 5, 2026, GPT-5.4 pushed the benchmark bar noticeably higher. The thinking model matched or outperformed human professionals on 83% of knowledge-work tasks – a meaningful jump from the 70.9% that already turned heads when GPT-5.2 launched.

What shifted the picture most dramatically, though, is native computer use. GPT-5.4 is the first mainline OpenAI model that can read a screen and issue mouse and keyboard commands directly, without leaning on a separate specialized model. On OSWorld-Verified, which gauges desktop navigation through screenshots alone, it scored 75.0% – edging past the measured human baseline of 72.4%. The deprecation of older ChatGPT models shows that what counts as the best AI system shifts quickly.

Technical Performance of GPT-5.4 Models

| Benchmark Metric | GPT-5.4 Thinking | GPT-5.4 Pro | GPT-5.2 Thinking |
| --- | --- | --- | --- |
| GDPval Win/Tie Rate | 83.0% | 82.0% | 70.9% |
| SWE-Bench Pro Accuracy | 57.7% | N/A | 50.8% |
| BrowseComp (Web Research) | 82.7% | 89.3% | 65.8% |
| OSWorld-Verified (Computer Use) | 75.0% | N/A | 47.3% |
| GPQA Diamond (Science) | N/A | N/A | 92.4% |

The GPT-5.4 family raises the bar on context, too. The API version now supports up to 1 million tokens, which puts a completely different class of document work within reach. And accuracy held its ground as the window grew. Hallucination rates fell further compared to 5.2: individual factual claims are 33% less likely to be wrong, while full responses are 18% less likely to contain errors overall. For professionals dealing with contracts, technical reports, or multi-source research, that reduction is genuinely noticeable.

Another specialized tool is Prism, which targets the scientific community. Prism allows researchers to turn whiteboard photos into LaTeX code. It maintains “deep context awareness” of entire academic papers. This allows the AI to check if conclusions match data in specific figures. GPT-5.4 takes this further with native computer use. The model can now interact with desktop software directly, making it genuinely useful for scientists navigating multiple tools at once.

Read about ChatGPT’s Accuracy in our article.

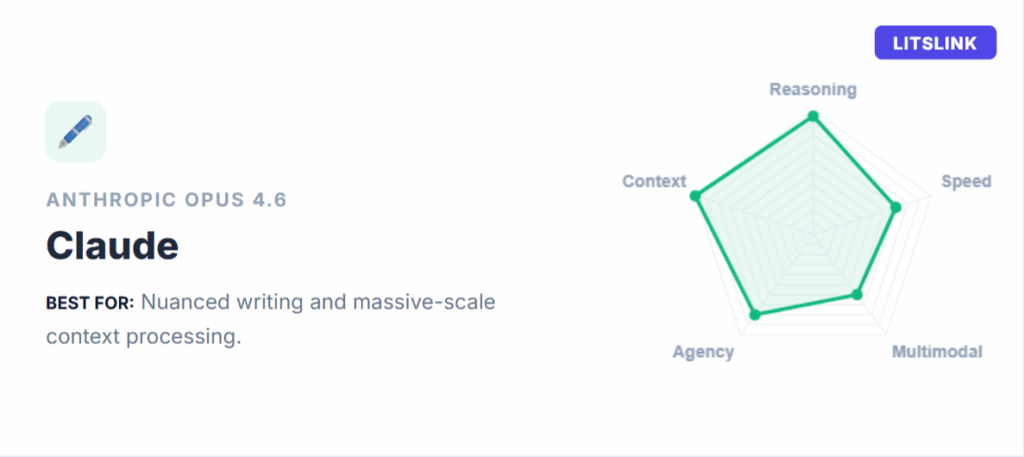

2) Anthropic – Claude Opus 4.6

What it is: A flagship large-language model focused on strong reasoning, coding support, and sustained agentic work.

Best for:

- High-quality writing with consistent tone

- Long-context analysis of large documents and knowledge bases

- Code review and debugging alongside text work

Why it’s advanced in early 2026: Anthropic released Claude Opus 4.6 in February 2026. It is described as the world’s most intelligent AI for coding and agents. The beta 1M token context window offers a major advantage for “dump the repo and reason over it” workflows. This is the smartest AI right now for handling massive context.

Claude Opus 4.6 leads the industry in agentic planning. It can break complex goals into independent subtasks. These tasks are then executed in parallel by “Agent Teams”. This coordinated effort is a massive leap for productivity. It is especially effective for reading large codebases or legal libraries.
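The “independent subtasks in parallel” pattern is easy to picture as a fan-out/fan-in loop. The sketch below is a generic illustration using Python’s standard library; `run_subtask`, `run_in_parallel`, and the example subtasks are hypothetical stand-ins, and nothing here calls Anthropic’s API or reflects how Agent Teams is implemented.

```python
from concurrent.futures import ThreadPoolExecutor


def run_subtask(subtask: str) -> str:
    """Stand-in for one agent working on one independent subtask."""
    return f"done: {subtask}"


def run_in_parallel(subtasks: list[str], max_workers: int = 4) -> list[str]:
    """Fan out independent subtasks, then gather results in the original order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(run_subtask, subtasks))


# Hypothetical decomposition of one goal into independent subtasks.
subtasks = ["map module dependencies", "list public APIs", "find untested files"]
results = run_in_parallel(subtasks)
for line in results:
    print(line)
```

The pattern only pays off when subtasks truly don’t depend on each other; anything that needs another subtask’s output has to wait for the gather step.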

Some investors even feared that Claude’s advanced AI would replace traditional software subscriptions, a scare that market traders dubbed the “SaaSpocalypse”.

Opus 4.6 includes a feature called adaptive thinking. This allows the model to decide when deep reasoning is necessary. Anthropic also launched Claude in Excel and PowerPoint. It can generate full presentation decks that adhere to brand templates.

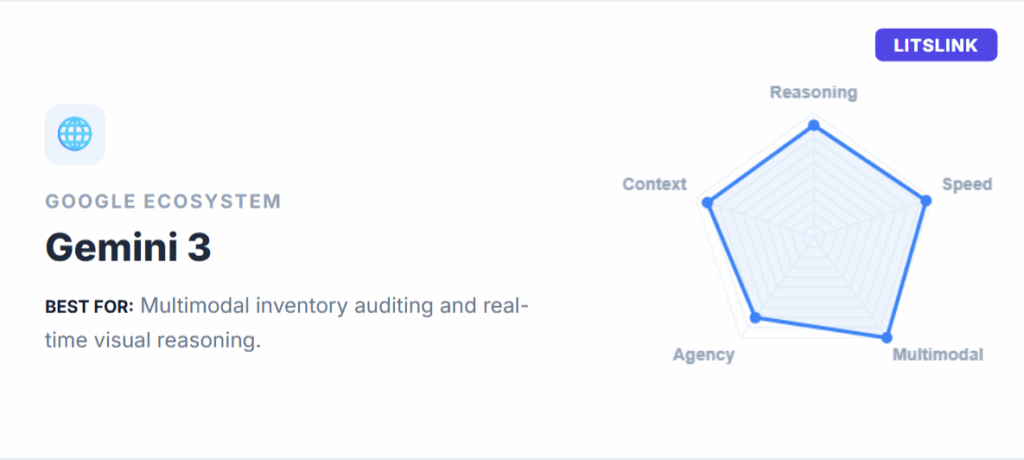

3) Google – Gemini 3 (Gemini App + API Ecosystem)

What it is: Google’s multimodal model family and app ecosystem, tightly connected to the company’s product surface area.

Best for:

- Ecosystem-integrated knowledge work

- Agentic coding; tool-use workflows

- Deep research synthesis leveraging infrastructure

Why it’s advanced in early 2026: Positioned as the agentic coding leader, this system processes 1 trillion+ tokens daily. The Gemini 3 Pro variant excels at complex scientific reasoning, while Gemini 3 Flash prioritizes speed.

Natively multimodal from inception, it synthesizes text, video, audio, and code together, making it a premier research tool for speech-to-text transcription and other multimodal workflows. Its “Deep Think” mode targets hard problems in mathematics and economics, including open problems in the Erdős Conjectures database.

Gemini 3 Pro vs. Flash Comparison

| Technical Spec | Gemini 3 Flash | Gemini 3 Pro |
| --- | --- | --- |
| Input Price (1M) | $0.50 | $2.00 – $4.00 |
| Latency | 0.21 – 0.37s | 0.5 – 1.5s |
| SWE-Bench Score | 78.0% | 76.2% |
| GPQA Diamond | 90.4% | 91.9% |
| Context Window | 1M Tokens | 2M Tokens |

Google has also integrated Gemini 3 into its Workspace ecosystem. It can search Drive, Docs, and Gmail to answer queries. This integration makes it the best AI program for businesses using Google tools.

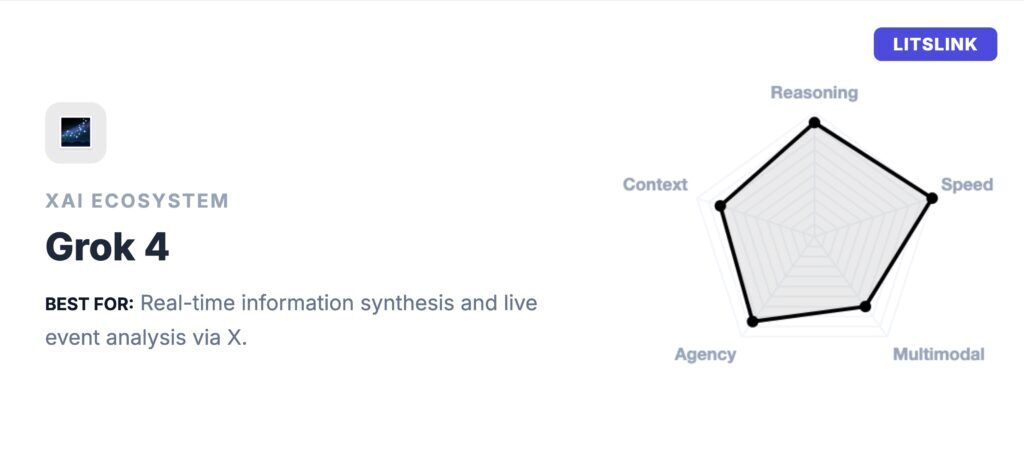

4) xAI – Grok 4.1

What it is: xAI’s flagship reasoning model, built with native access to real-time X platform data. While most advanced AI systems work from a fixed knowledge snapshot, Grok 4.1 continuously pulls live information from X alongside standard web search.

Best for:

- Teams that need live market signals, breaking news synthesis, or real-time competitive intelligence

- Complex multi-step reasoning over long documents, with up to a 2M token context window in Grok 4 Fast

- Financial research, investment thesis development, and analysis tasks where yesterday’s data changes the answer

Why it’s advanced in early 2026: When Grok 4 launched in July 2025, xAI trained it using 100x more compute than Grok 2 and 10x more reinforcement learning than Grok 3. That pushed the model to PhD-level performance across all academic disciplines simultaneously – scoring near-perfect on the GRE and a perfect SAT. By November 2025, Grok 4.1 arrived as a production upgrade: hallucination rates dropped 65% relative to its predecessor (from 12.09% down to 4.22%), and in blind A/B testing, users chose Grok 4.1 over Grok 4 more than 64% of the time.
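A quick check of the hallucination figures shows the difference between a relative drop and an absolute one. The helper name below is ours, and the two rates are simply the ones quoted above.

```python
def relative_reduction(before: float, after: float) -> float:
    """Percentage drop relative to the starting value."""
    return (before - after) / before * 100


rate_before, rate_after = 12.09, 4.22  # hallucination rates (%) quoted above

# Relative drop, in percent of the original rate.
print(round(relative_reduction(rate_before, rate_after), 1))
# Absolute drop, in percentage points.
print(round(rate_before - rate_after, 2))
```

The relative drop works out to about 65.1%, while the absolute drop is 7.87 percentage points. Both describe the same change, so it’s worth checking which one a vendor is quoting.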

What actually sets Grok 4.1 apart from the other top AI models in this category isn’t benchmark scores – it’s the live data layer. GPT-5.2 and Claude Opus 4.6 work from fixed training snapshots. Grok 4.1 can synthesize breaking news from X, leaked earnings calls, and live regulatory updates inside the same reasoning context, without a separate retrieval step.

For analysts, product teams tracking competitors, or anyone whose research depends on what’s happening right now rather than what happened last quarter, that’s a genuine structural advantage. On the Artificial Analysis Intelligence Index, Grok 4 scores 42 against a median of 27 for comparable models – placing it well above average in the strongest AI tier for reasoning tasks.

Grok 4 vs. Grok 4 Fast: Key Specs

| Technical Spec | Grok 4 | Grok 4 Fast |
| --- | --- | --- |
| Input Price (1M tokens) | $3.00 | $0.20 |
| Output Price (1M tokens) | $15.00 | $0.50 |
| Context Window | 256K tokens | 2M tokens |
| Avg. TTFT | 11.89s | ~1s |
| Intelligence Index | 42 (median: 27) | Lower |

The tradeoff between the two variants is worth understanding before you commit. Grok 4 (the full reasoning model) is slower (average TTFT of nearly 12 seconds) and noticeably more expensive.

For exhaustive research synthesis or complex multi-document analysis, Grok 4’s thoroughness pays off. For quick turnaround tasks, Grok 4 Fast handles the job at a fraction of the price and with a 2M token context window to boot.
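One way to encode that tradeoff is a small routing rule. This is a sketch under our own assumptions: the `Variant` class, the `pick_variant` function, and the decision logic are hypothetical, the figures are copied from the spec table above (with “~1s” treated as 1.0), and nothing here uses the xAI API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Variant:
    name: str
    context_tokens: int      # maximum context window
    typical_ttft_s: float    # approximate time to first token


# Figures taken from the spec table; "~1s" is approximated as 1.0.
GROK_4 = Variant("Grok 4", 256_000, 11.89)
GROK_4_FAST = Variant("Grok 4 Fast", 2_000_000, 1.0)


def pick_variant(prompt_tokens: int, max_ttft_s: float, needs_deep_reasoning: bool) -> Variant:
    """Prefer the full model only when the prompt fits and the latency budget allows it."""
    fits = prompt_tokens <= GROK_4.context_tokens
    fast_enough = GROK_4.typical_ttft_s <= max_ttft_s
    if needs_deep_reasoning and fits and fast_enough:
        return GROK_4
    return GROK_4_FAST


print(pick_variant(50_000, 30.0, True).name)    # deep analysis, relaxed latency budget
print(pick_variant(50_000, 2.0, True).name)     # same task, tight latency budget
print(pick_variant(900_000, 30.0, True).name)   # prompt too large for the 256K window
```

In practice the thresholds would come from your own latency and quality measurements rather than a published average.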

One limitation worth flagging honestly: multimodal capabilities still trail GPT-4o and Gemini 3. Vision support exists, but it’s not the primary reason to select this model. The Agent Tools API (web search + code execution) is solid and well-documented, but the xAI ecosystem around Grok is still maturing compared to OpenAI or Google’s tooling depth.

For pure text reasoning with a live data layer, though, Grok 4.1 is a serious option.

Field 2: Coding and Engineering

5) GitHub – GitHub Copilot (Agent Mode + AI Coding)

What it is: Copilot has evolved beyond autocomplete into artificial intelligence workflows. This advancement, underpinned by a deep understanding of machine learning algorithms, enables the system to plan tasks, modify files, and manage PR/review cycles.

Best for:

- Teams already living in GitHub and VS Code

- PR generation; code review assistance; test generation

- “Delegate the boring parts” while keeping developers in control

Why it’s advanced in early 2026: GitHub now features an autonomous “Agent Mode” in VS Code. In this mode, Copilot determines which files to edit. It offers terminal commands to complete complex tasks.

The January 2026 release of VS Code v1.109 allows developers to run Claude and Codex models side by side. This shift toward comprehensive artificial intelligence services allows users to “delegate the boring parts” while maintaining developer control.

New Capabilities in GitHub Copilot 2026

| New Feature | Description | Developer Benefit |
| --- | --- | --- |
| Agent Mode | Autonomous file editing and execution | Completes complex tickets alone |
| Parallel Subagents | Runs multiple tasks simultaneously | Faster project builds |
| GPT-5.3-Codex | Unified generation and reasoning model | 25% faster generation |
| Workspace Priming | Proactive rule recognition on file open | Automates formatting instantly |
| Web Access Tools | Retrieves content from URLs | References the latest documentation |

OpenAI launched GPT-5.3-Codex specifically for GitHub Copilot. This model combines general-purpose reasoning with deep code training. It is the smartest AI for interactive coding and bug fixing, scoring 55.6% on SWE-Bench Pro.

While Copilot is a general-purpose tool, its advanced reasoning is particularly effective for teams building specialized, high-stakes software, such as:

- FinTech: Streamlining secure financial software development through automated bug analysis.

- HealthTech: Accelerating the creation of compliant healthcare software solutions using parallel subagents for faster testing and validation.

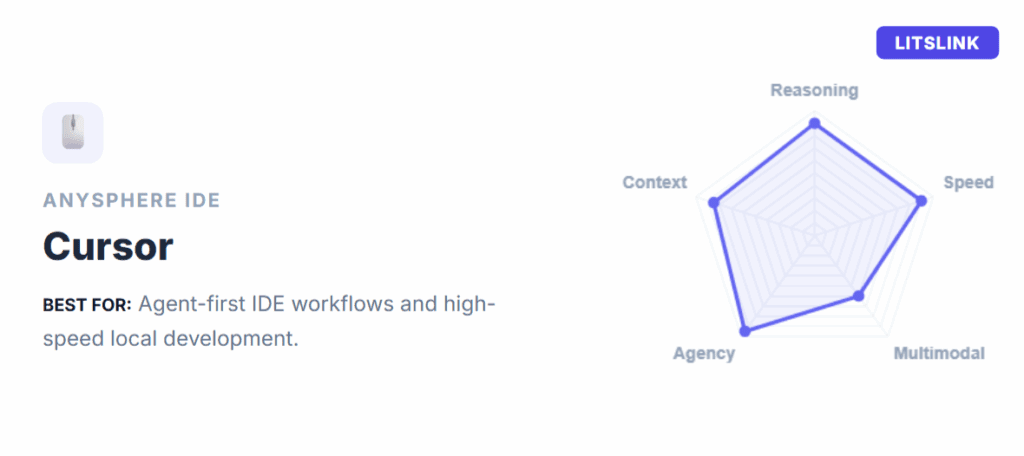

6) Cursor (Anysphere) – AI-Native IDE Workflows

What it is: Cursor is a coding environment built around the “plan-edit-review” loop, widely regarded as the best AI software for rapid iteration.

Best for:

- Fast iteration in medium-to-large codebases

- Developers who want a tight loop: explain, change, verify

- Organizations adopting an AI-first editor workflow

Why it’s advanced in early 2026: Cursor documents agent-centric workflow design, including planning, execution, and review. Teams using Cursor can define “Rules” (e.g., tech stacks) in their file system.

For example, a rule might mandate the use of Tailwind CSS. The artificial intelligence sees these rules before writing, ensuring adherence to project standards.

Cursor also supports “Agent Skills” via SKILL.md files. These allow the system to load custom commands and hooks. This transforms the model from a text generator into a task executor. Developers report AI-assisted development moves twice as fast, making it the most sophisticated AI for ‘vibe coding’.

Practical Tips for Cursor Users:

- Start big features in Plan Mode to create durable artifacts.

- Use @git to include recent commits in the context window.

- Check your rules into git so everyone benefits.

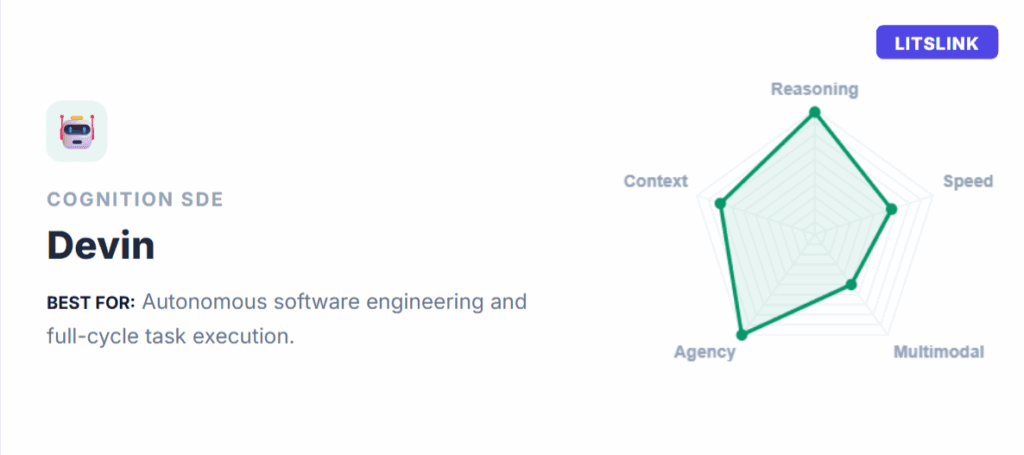

7) Cognition – Devin

What it is: A collaborative AI teammate designed to take on engineering tasks end-to-end. It can understand a goal, plan the steps, and ship a pull request. Major firms like Infosys and Cognizant have deployed Devin at scale and use it to redefine junior software engineering roles.

Best for:

- Backlog cleanup, including small-to-medium tickets, test coverage, and documentation updates

- Repetitive engineering tasks like migration scripts and minor refactors

- Groups that can define a clear “definition of done” or review procedures

Why it’s advanced in early 2026: Cognition launched “Devin Review” to prevent low-quality code, helping engineers understand complex PRs by augmenting human attention with artificial intelligence.

Devin also excels at difficult legacy migrations. It can move systems from .NET Framework to .NET Core in two weeks. This process usually takes months for human teams.

The Devin AI deployment signals a pivot toward fully autonomous workflows, reducing context switching and increasing staff satisfaction. Devin is the world’s strongest AI for backlog cleanup.

Field 3: Generative Media (Image, Video, Audio)

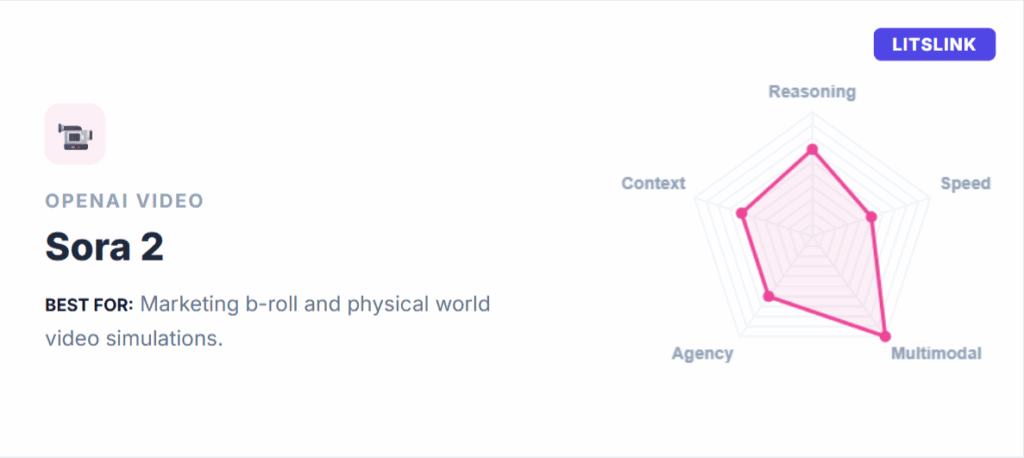

8) Sora 2 (OpenAI)

What it is: A video and audio generation model accessed through the Sora product experience.

Best for:

- Marketing content prototypes, concept videos, storyboarding

- Short-form creative exploration with high iteration

- Teams that need fast “draft-to-visual” workflows

Why it’s advanced in early 2026: OpenAI’s Sora 2 is the most advanced AI in the world for video. It can generate videos lasting fifteen to twenty-five seconds. This is a massive improvement over the original version. It features physically accurate motion and synchronized dialogue. The system understands the relationship between visual content and sound. It creates ambient effects that perfectly match the on-screen action.

A defining feature of Sora 2 is its $1 billion partnership with Disney. This allows the AI to generate videos featuring licensed characters. It provides proper intellectual property protection for brand campaigns. This signals a shift toward regulated and licensed AI content. For filmmakers, Sora 2 is the smartest AI for expansive world-building.

Sora 2 excels at complex camera movements, such as dolly zooms. It feels like the work of a professional cinematographer. It maintains physical permanence across frames. If a character bites a cookie, the bite remains visible.

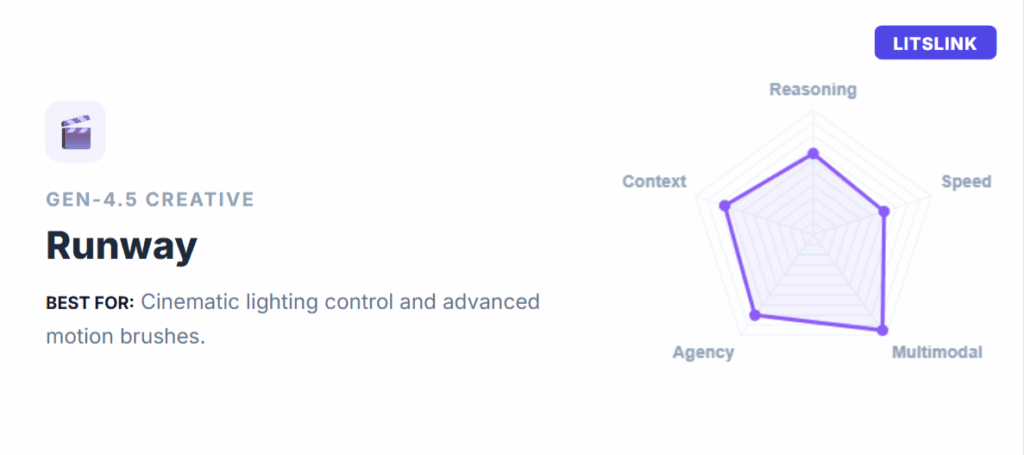

9) Runway Gen-4.5 (Runway)

What it is: A high-end video generation model positioned around motion quality, fidelity, and creative control. Runway Gen-4.5 is a top-rated video model for cinematic quality.

Best for:

- Creative teams that need cinematic video generation with more control knobs

- Rapid pre-production, including visual ideation, style exploration, and short sequences

- Agencies building repeatable workflows for clients

Why it’s advanced in early 2026: It ranks #1 on the Artificial Analysis Text to Video Leaderboard. The model outperforms competitors in blind human evaluations. It focuses on motion quality, prompt fidelity, and visual detail. It allows for granular directorial control through detailed camera instructions.

Gen-4.5 is built and optimized on NVIDIA Hopper and Blackwell GPUs. The model maintains coherence in fine details like skin texture. It handles layered objects and dynamic lighting transitions naturally.

Insights for Runway:

- Use Gen-4.5 for “short, sharp” hero shots in social ads.

- Leverage “Motion Brushes” to paint specific areas of movement.

- Generate high-impact transitions for brand film emotional beats.

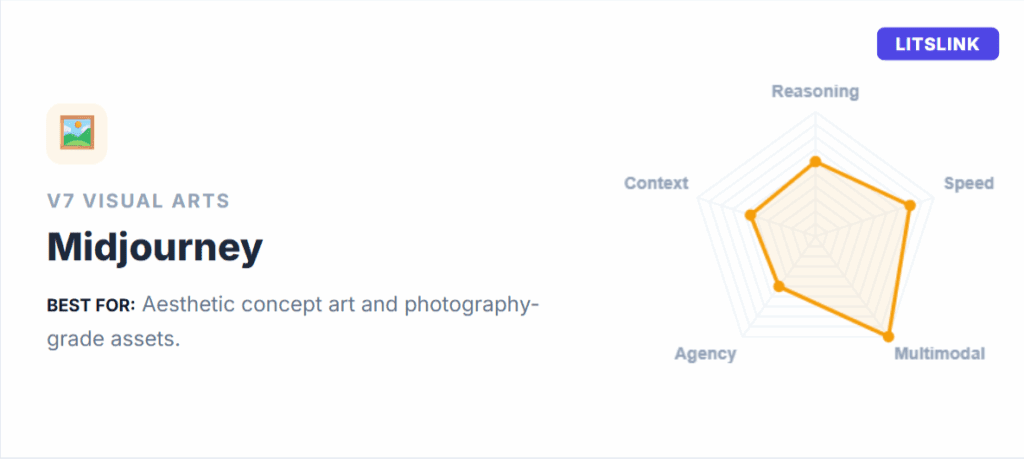

10) Midjourney V7 (Midjourney)

What it is: An image generation system known for high aesthetic quality and strong creative outputs.

Best for:

- Brand and marketing visuals, including concept art, product scenes, and style exploration

- Fast visual ideation for UI/UX and campaigns

- Creating a “visual direction” quickly, before production

Why it’s advanced in early 2026: Midjourney V7 was released in April 2025 and quickly became the default model. V7 is the first model to enable personalization by default. This allows the AI to learn what the user finds beautiful.

A new “Draft Mode” allows for rapid iteration at 10x speed. This mode transforms the web interface into a “conversational mode”. Users can verbally instruct the AI to change elements.

For example, they can swap a cat with an owl using voice commands.

The model also features “Omni Reference” for improved accuracy. This helps maintain consistency across items such as logos and specific objects. Midjourney is also expanding into 3D content production. It uses “NeRF-like” modeling for immersive content.

Field 4: Agents and Automation

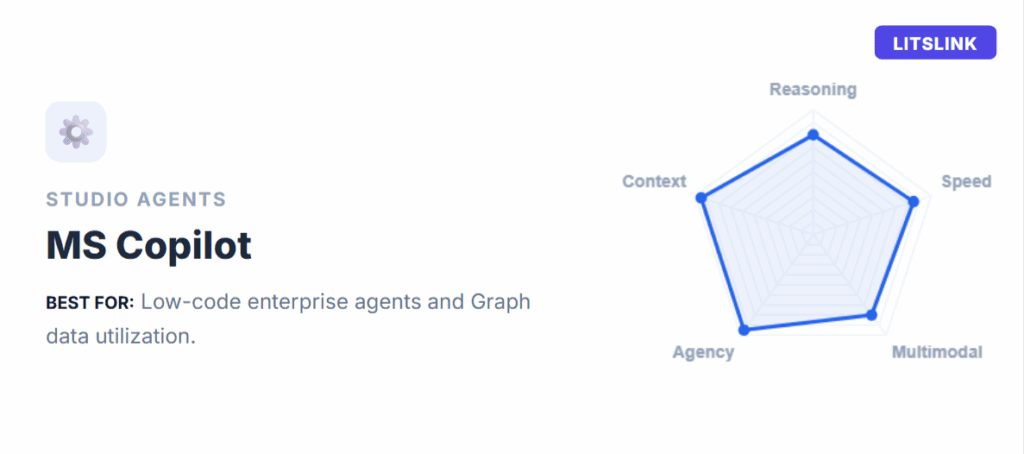

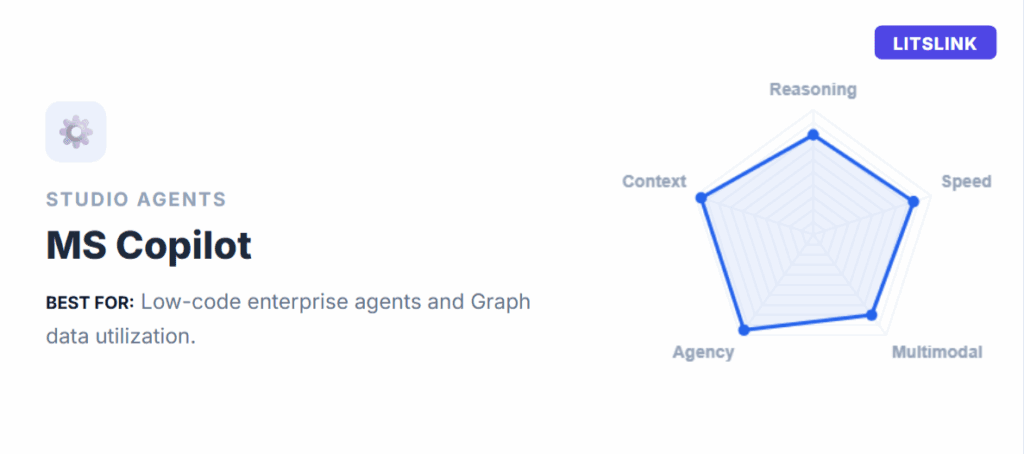

11) Microsoft – Microsoft Copilot Studio

What it is: Microsoft Copilot Studio is a platform used by over 80% of the Fortune 500 to build and govern artificial intelligence inside enterprise workflows.

Best for:

- Enterprises building internal AI for support, IT ops, HR, and finance operations

- “AI plus governance” scenarios, including logs, admin control, and lifecycle management

- Automating legacy systems that lack modern APIs

Why it’s advanced in early 2026: The platform recently introduced “computer use” capabilities, allowing models to interact with Windows computers as humans do. They can select buttons and enter text into legacy apps. This is vital for systems without modern APIs.

Organizations turn natural language intent into automation. Sales and HR leaders can build assistants to own end-to-end workflows without technical help. For example, an AI system can autonomously manage employee onboarding.

Microsoft emphasizes that human-AI teams are growing globally. Copilot Studio provides tools to track model ROI and performance. It protects enterprise data using Zero Trust principles. This ensures that the world’s most powerful AI remains secure.

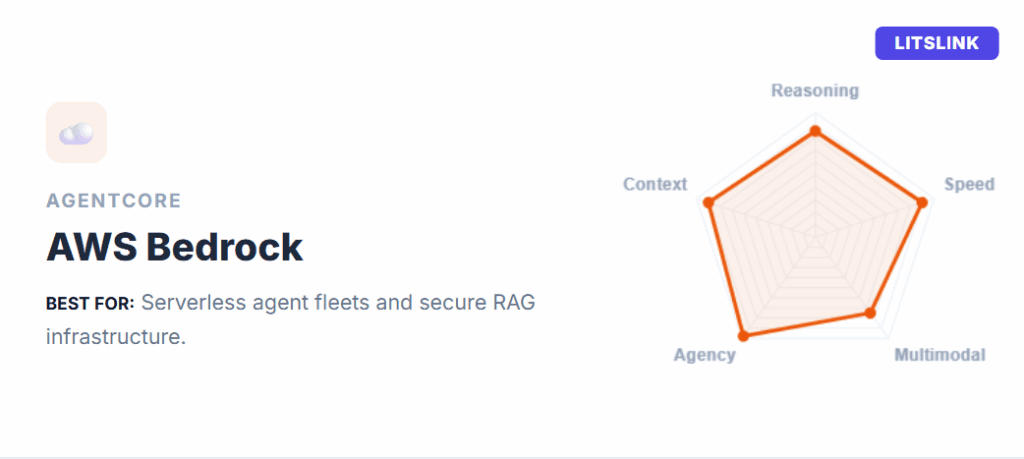

12) Amazon Web Services – Amazon Bedrock Agents (+ AgentCore)

What it is: A framework and managed service to build artificial intelligence that orchestrates foundation models, calls APIs, and uses knowledge bases.

Best for:

- Teams building AI products in AWS

- Workflows that require tool calling, plus RAG, plus strong controls

- Scaling from prototype to production with security posture and ops tooling

Why it’s advanced in early 2026: AWS recently launched AgentCore to accelerate AI development, offering managed memory for personalized conversations and sandboxed code execution.

A critical feature is “Policy in Amazon Bedrock”. This allows users to set boundaries for system actions. For example, a policy can “Block all refunds greater than $1,000.”
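To show what a boundary like that amounts to, here is a minimal guard written in plain Python. It is a conceptual sketch only: the `Action` class, the `is_allowed` function, and the default limit are our own hypothetical names, and this is not the policy syntax or API that Amazon Bedrock uses.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    kind: str          # e.g. "refund"
    amount_usd: float


def is_allowed(action: Action, refund_limit_usd: float = 1000.0) -> bool:
    """Return False for refunds above the limit; allow everything else."""
    if action.kind == "refund" and action.amount_usd > refund_limit_usd:
        return False
    return True


print(is_allowed(Action("refund", 250.0)))     # small refund
print(is_allowed(Action("refund", 1500.0)))    # over the limit
print(is_allowed(Action("refund", 1000.0)))    # exactly at the limit ("greater than" rule)
```

The point of keeping such checks outside the model is that the agent cannot talk its way past them: the action is evaluated before it reaches the real system.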

AgentCore is ideal for organizations seeking to reduce operational overhead. It handles infrastructure, security, and scaling automatically. Developers can focus on building unique capabilities for their models.

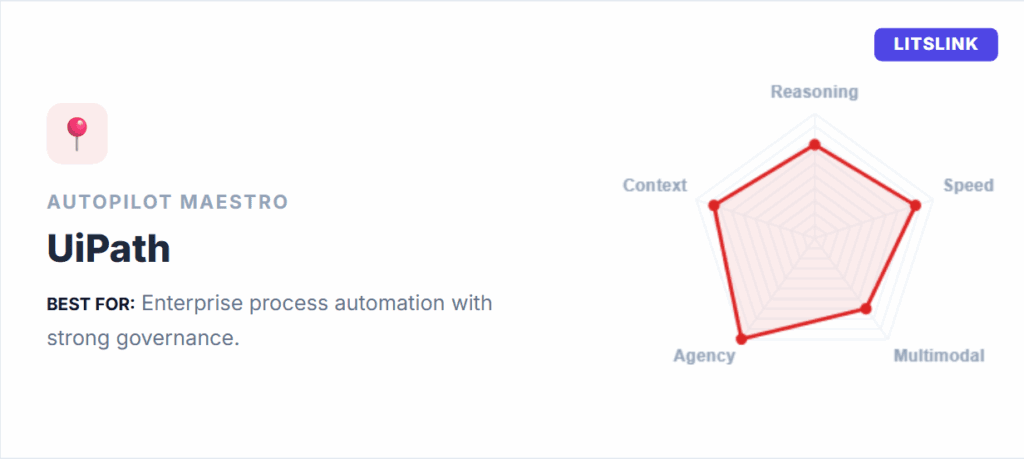

13) UiPath – UiPath Autopilot (Agent Builder / Maestro)

What it is: AI-driven automation across business workflows. UiPath has repositioned itself as the foundational orchestration layer.

Best for:

- Enterprise process automation with strong governance needs

- Document-heavy operations like claims, underwriting, onboarding, and procurement

- Orchestrating “AI plus automation plus humans” across end-to-end processes

Why it’s advanced in early 2026: Their Autopilot platform mixes artificial intelligence with RPA building blocks. The company’s focus is on “governed autonomy” for 2026. This ensures decisions stay transparent and compliant with policies.

UiPath introduced “ScreenPlay” for natural language UI automation. It understands intent and autonomously executes multi-step plans. The company acquired WorkFusion for industry-specific AI, pre-trained for financial crime compliance.

It can manage systems from Microsoft, Google, and OpenAI together. This cross-ecosystem orchestration is a major trend for 2026. UiPath also introduced “Agentic Testing” to repair broken tests instantly. This ensures that enterprise automation remains reliable at scale.

Honorable Mention: One Model Worth Watching

One name keeps coming up in the conversations our engineers are having right now. It sits outside the four fields above – not because it’s unimpressive, but because the jury is still out on enterprise-scale reliability. That’s a very different thing from being bad.

14) DeepSeek – DeepSeek V4

This one has the AI community paying close attention for a specific reason: if the internal test results hold up publicly, V4 could outperform Claude and ChatGPT on long-context coding tasks – as an open-weight model. Think about what that means for teams running high-volume inference who currently treat frontier API pricing as a fixed cost of doing business.

The architecture behind it is genuinely interesting. V4 is built around DeepSeek’s sparsity-first design philosophy (the same approach that made V3 and R1 far cheaper to run than their benchmark peers). This time, it adds native multimodality (text, image, video), a new memory system called Engram that separates static factual recall from active neural reasoning, and hardware optimization built around Nvidia’s Blackwell GPUs.

The result, on paper, is one of the more efficient paths to top AI model performance anyone has published.

The complication: DeepSeek withheld V4 from US chipmakers during development, routing early access through Huawei and Cambricon instead. That’s not a technical problem – it’s a procurement and geopolitical one. Enterprises operating under US regulatory frameworks will need to think carefully about how they evaluate and deploy it, regardless of what the benchmarks say.

Who Depends on the Future of AI?

It’s easy to think the future belongs to the companies building top AI models. In reality, AI is shaped by everyone in the chain: the people who build it, fund it, deploy it, and live with the results.

1) Builders (startups + enterprises).

Model releases are only the start. The real advantage comes from data pipelines, guardrails, evaluation, and shipping. LITSLINK has seen this up close. Summalegal needs accuracy, transparency, and careful handling of private content, while Horoscope Generation needs speed, customization, and cost control. And TestWiz shows how AI can deliver value when it’s embedded into a clear testing workflow – not just a chatbot.

2) Data owners and domain experts.

The “truth” often lives inside documents and workflows—legal teams, educators, analysts, doctors. Without clean data and clear rules, even the best model becomes an unreliable chatbot.

3) Infrastructure and tool ecosystems.

Cloud platforms, GPUs, and deployment tooling decide what’s practical and affordable.

4) End users and regulators.

Regulators enforce safety and transparency, while users reward utility and trust.

What to Expect from the Industry in 2026?

In 2026, AI is a major force driving global innovation. It is no longer just a trend. Businesses now prioritize artificial intelligence services to automate routine tasks.

Rapid progress in large-context language models, multimodal understanding, and computer vision is driving big changes in healthcare, entertainment, and other areas. Beyond automating routine tasks, these applications help businesses optimize processes and make more informed decisions based on AI-generated insights.

As artificial intelligence systems become more advanced, we’ll also see a greater emphasis on explainability and transparency.

In addition to developing new applications and programs, businesses of all sizes will focus on integrating the principles of responsible AI into their automation strategies. It’s already obvious that the rapid evolution of this technology will require stricter regulations and guidelines. Otherwise, we will see not only more fakes like the Scarlett Johansson and Kanye West deepfake video, but also widespread misunderstanding and confusion in workplaces.

Overall, advanced AI for business keeps accelerating. The biggest wins will go to teams who ship useful workflows, not demos.

Conclusion

In 2026, the “world’s most advanced AI” conversation only becomes useful when you translate it into:

- Which field are we solving for?

- What tasks and metrics define success?

- What governance do we need before automation touches real systems?

If you’re building or integrating AI into a real product – customer support automation, internal copilots, agentic coding workflows, or generative media pipelines – LITSLINK can help you go from evaluation to production: architecture, data pipelines, model selection, guardrails, and monitoring.