The era of personalized digital assistants has fully arrived. It’s now possible to create your own intelligent assistant to handle the tasks in front of you. Such assistants are no longer futuristic ideas; they’re essential tools for getting things done. Platforms like Siri, ChatGPT, and Amazon Alexa have significantly changed how we interact with software.

According to Gartner experts Enza Iannopollo and Rob Preston, by 2028 up to 75% of software engineers will be working with such assistants. Developing a custom application enhances privacy and functional specialization, and gives you more overall control of your digital environment.

Learning how to build your own AI assistant is simpler than you might think. You don’t need a PhD in machine learning to start. This guide breaks the process into clear, actionable steps for both work and personal use.

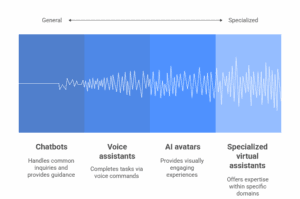

Types of AI Assistants

AI companions (virtual agents/chatbots) are automated programs that help users and provide relevant answers to customer questions. They can carry out simple requests, such as setting alarms or delivering weather updates. They can even be taught some more sophisticated functions, like comprehending natural language. Some use information to learn and improve over time in how they respond.

A good example is machine-learning-based translation utilities such as Google Translate, which enable people to communicate in different languages and are deployed at an enormous scale, with over a billion installs globally.

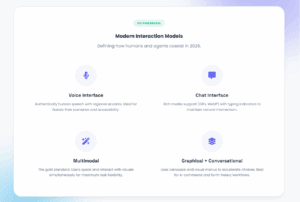

Modern technology has moved far beyond simple “if-then” logic. To build your own AI chatbot or AI assistant today, you must first pick its primary form. The landscape has shifted toward “agentic” technology: systems that actually do work on your behalf.

Your specific goals will determine which tech stack you need. Here is a breakdown of four main categories of virtual companions used today.

Modern Virtual Agent Categories

| Type | Primary Interaction | 2026 Use Case |
| --- | --- | --- |
| Smart Chatbots | Text/Messaging | 24/7 sales and precise technical support. |
| Speech-driven AI | Natural Talk | Hands-free home control and real-time translation. |
| Digital Avatar | Visual/Video | Interactive brand ambassadors, gaming, customer support, and virtual tutors. |
| Virtual concierge | Action Execution | Booking travel, managing emails, helping with medical diagnoses, or managing financial portfolios. |

Why Create Your Own AI Assistant: Key Benefits

General‑purpose automation tools are powerful, but they are not built around your life, your workflows, or your business. Assistants like Siri or Alexa handle simple tasks well, yet they cannot adapt to specialized needs or understand the unique context behind your daily decisions.

Making your own assistant companion changes everything. It gives you control and unlocks new value.

Personalization and Efficiency

A custom AI assistant can learn your routines, understand your preferences, and support your work style. Instead of generic responses, it delivers tailored guidance and automates tasks that consume your time.

A 2026 McKinsey study found that intelligent automation can boost productivity by more than 30%. With a personalized assistant, repetitive tasks — scheduling, reminders, document lookups, routine communication — become effortless, freeing you to focus on higher‑value work.

Custom Solutions and Privacy

Standard platforms often fall short when you need domain‑specific capabilities. Whether you’re managing research, coordinating a smart home, or supporting a specialized industry, a custom assistant can be designed around your exact requirements.

Can you build a system that is actually private? Yes, provided you host it yourself. A self-built helper keeps your data on your own infrastructure, so no third party monetizes your content.

Independence and Long‑Term Growth

Developing your own intelligent assistant also deepens your understanding of modern machine‑learning tools. You gain insight into how these systems operate, how they can be improved, and how they can be adapted to new challenges.

With third-party apps, you are stuck with their limitations. However, your custom assistant gives you total independence. You decide on functionality and rules. A 2024 survey by PwC found 73% of organizations now prefer purpose-built solutions for greater control.

If you’re building your own assistant, start with simple jobs and add more as needed. This guide also shows you how to build a custom personal assistant that runs on your devices without anyone else seeing it.

How to Make Your Own AI Assistant From Scratch

As the saying goes, “A journey of a thousand miles starts with one step.” In technology terms, this journey takes six carefully crafted steps that build on each other to cover the full stack of your virtual agent.

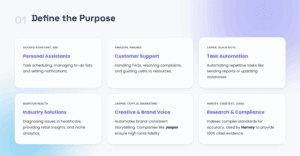

Step 1: Decide the Purpose of Your AI Helper

Before you write any code, be very clear about what you need to solve. What operations will your bot perform? Who is going to use it? How will you define success? Think of this step as building a foundation for everything that follows.

Key Questions to Define the Use Case

- What specific problems should it solve?

- What tasks will it perform?

- Who is the intended user, and what do they expect from it?

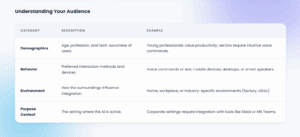

Target Audience

Understanding your audience is crucial for building an effective model. Consider these factors:

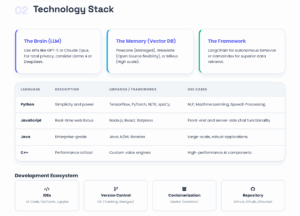

Step 2: Choose the Right Technology Stack

Modern development of intelligent systems rarely requires starting from zero. Powerful pre-built components exist for nearly every layer of your system.

Building everything yourself isn’t the goal. It’s about choosing the right combination of resources that work together seamlessly. This section breaks down the essential components you’ll need.

Component 1: Brain (Large Language Model)

The large language model (LLM) is the “brain” of your agent: the component that understands language and forms replies. Training an LLM from scratch costs millions of dollars. Fortunately, you don’t need to.

A simple path is to call a hosted model API, such as OpenAI’s GPT-5.2 family or Anthropic’s Claude Opus 4.6.

However, pro developers often use a model aggregator rather than a direct connection. This adds a layer of resilience: if one provider fails, you can switch to another right away. It can also reduce latency and simplify procurement with local-currency billing. Check out n1n.ai for enterprise stability or OpenRouter for flexible experimentation.
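The failover idea can be sketched in a few lines. This is a minimal illustration, not how any particular aggregator works internally: the `Provider` type, `complete_with_fallback`, and the two stand-in providers are hypothetical names invented for this example, and a real provider function would wrap an HTTP client.

```python
from typing import Callable, Sequence

# Hypothetical provider type: any function that takes a prompt and returns text.
Provider = Callable[[str], str]


def complete_with_fallback(
    prompt: str, providers: Sequence[tuple[str, Provider]]
) -> tuple[str, str]:
    """Try each provider in order; return (provider_name, reply) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # a real client would catch narrower error types
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Stand-in providers for illustration only.
def flaky_primary(prompt: str) -> str:
    raise TimeoutError("primary timed out")


def stable_backup(prompt: str) -> str:
    return f"echo: {prompt}"


name, reply = complete_with_fallback(
    "hello", [("primary", flaky_primary), ("backup", stable_backup)]
)
print(name, reply)  # prints: backup echo: hello
```

The ordering of the list doubles as a priority list, so swapping the preferred model is a one-line change.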

Do you need total data privacy for your project? Then use open-source models. Meta’s Llama 4 and DeepSeek can run entirely on your own servers. Hugging Face remains the easiest way to find and deploy these tools.

Component 2: Memory (Vector Database)

To create an AI assistant that knows your specific content, you need a vector database. These specialized databases store information as mathematical vectors. This enables semantic search: finding information based on meaning, not just keywords.
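To make “search by meaning” concrete, here is a small sketch of what a vector database does at its core: rank stored vectors by cosine similarity to a query vector. The three-dimensional vectors below are made up by hand for illustration; a real system would obtain much longer vectors from an embedding model and use an approximate index instead of a full scan.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Made-up "embeddings" (assumption): real ones come from an embedding model.
index = {
    "How to reset your password": [0.9, 0.1, 0.0],
    "Quarterly sales report": [0.0, 0.2, 0.9],
    "Recovering a locked account": [0.8, 0.3, 0.1],
}


def search(query_vector, top_k=2):
    """Return the top_k stored texts whose vectors are closest to the query."""
    scored = sorted(
        index.items(),
        key=lambda item: cosine_similarity(query_vector, item[1]),
        reverse=True,
    )
    return [text for text, _ in scored[:top_k]]


# A query vector that points in the "account access" direction.
results = search([1.0, 0.2, 0.0])
print(results)
```

Note that the two account-related documents rank first even though they share almost no keywords with each other.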

Pinecone offers fully managed vector search as a service. It scales automatically and requires minimal setup. Weaviate provides open-source flexibility with strong performance. Milvus excels at handling massive scale.

Vector stores enable Retrieval Augmented Generation (RAG). Your system searches your documents instantly. It finds relevant context even when users phrase questions differently. It combines this retrieved information with the LLM’s reasoning to generate accurate, grounded responses.

Without RAG, LLMs may hallucinate facts. With RAG, they can cite specific sources from your documents.

Component 3: Framework (Orchestration Layer)

Orchestration frameworks glue your Brain and Memory together. They manage a complex flow of information. They handle retrieval, context assembly, prompt construction, and response generation.

LangChain is a top orchestration framework. It provides chains for common patterns, agents for autonomous behavior, and extensive integrations. It simplifies complex workflows into manageable components.

LlamaIndex specializes in content ingestion and retrieval. It excels at connecting LLMs to your existing content sources. It handles document parsing, chunking, and indexing automatically.
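For readers who have not met “chunking” before, the sketch below shows the basic idea in plain Python: split a long text into overlapping windows of words so that each piece fits in a prompt and no sentence is cut off from its neighbors. This is not LlamaIndex’s implementation; `chunk_words` and its default sizes are assumptions made for this example.

```python
def chunk_words(text: str, chunk_size: int = 50, overlap: int = 10) -> list[str]:
    """Split text into chunks of up to chunk_size words, sharing `overlap` words."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be non-negative and smaller than chunk_size")
    words = text.split()
    step = chunk_size - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the window already reached the end of the text
    return chunks


sample = " ".join(str(i) for i in range(10))
print(chunk_words(sample, chunk_size=4, overlap=1))
# prints: ['0 1 2 3', '3 4 5 6', '6 7 8 9']
```

Production splitters usually respect sentence or section boundaries as well, which this word-count version ignores.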

These frameworks dramatically accelerate development. What might take weeks of custom coding becomes hours of configuration. They embody best practices learned from thousands of intelligent applications.

Component 4: Choose a Programming Language

The choice of programming language depends on your project’s requirements and your expertise.

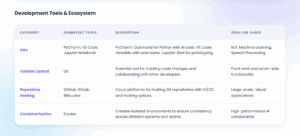

Component 5: Install Development Tools

Set up tools to write, test, and debug your code.

Step 3: Collect and Prepare Data

Content fuels every modern system, so use quality input. Collection draws on public and internal sources, and synthetic generation is also common now. Focus on high-quality curation, as raw volume is no longer sufficient. Poor data leads to bias and inaccurate outcomes.

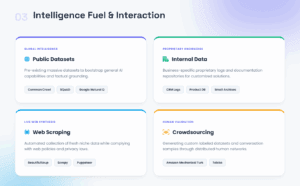

Sources of Data Collection

Data can be collected from multiple sources depending on your virtual agent’s purpose.

Quality control is essential. Pandas and OpenRefine are go-to tools for cleaning records, while Label Studio handles labeling tasks such as assigning user intents. Proactively identify and remove bias. Ensure datasets include diverse perspectives; otherwise, speech recognition will fail for underrepresented groups. Ethical collection isn’t optional either: it must follow applicable laws, including the requirements set out in the EU AI Act.

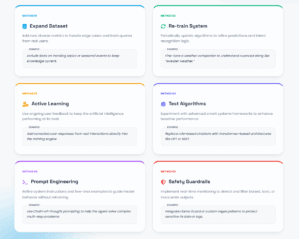

Step 4: Training Your AI Companion

For most people building these AI systems, “training” really entails a choice: RAG or fine-tuning. Each one has some benefits over the other, depending on specific situations.

The Modern Standard: Retrieval-Augmented Generation (RAG)

RAG is the leading approach for most applications. You don’t force-feed the system’s brain with data; you give it an open-book test. You map your data to a dense vector index, and when the user asks a question, the system retrieves the relevant chunks.

It feeds these chunks to the LLM as context. This is how to make an AI assistant that is accurate and verifiable.
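The retrieve-then-prompt flow described above might look roughly like this. It is a simplified sketch: `retrieve` ranks chunks by shared words purely as a stand-in for a real vector search, and `build_prompt` and its wording are assumptions, not a prescribed template.

```python
def retrieve(question: str, chunks: list[str], top_k: int = 2) -> list[str]:
    """Toy retriever: rank chunks by how many lowercase words they share with the question.

    A real RAG system would rank by embedding similarity in a vector index.
    """
    q_words = set(question.lower().split())
    ranked = sorted(
        chunks,
        key=lambda chunk: len(q_words & set(chunk.lower().split())),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(question: str, context_chunks: list[str]) -> str:
    """Number the retrieved chunks so the model can cite them as [1], [2], ..."""
    sources = "\n".join(
        f"[{i}] {chunk}" for i, chunk in enumerate(context_chunks, start=1)
    )
    return (
        "Answer using only the sources below and cite them by number.\n"
        f"Sources:\n{sources}\n"
        f"Question: {question}"
    )


chunks = [
    "Refunds are issued within 14 days of purchase.",
    "Our office is closed on public holidays.",
    "Refunds require the original receipt.",
]
question = "How do refunds work?"
prompt = build_prompt(question, retrieve(question, chunks))
print(prompt)
```

The resulting string is what gets sent to the LLM; numbering the sources is what makes citations in the answer possible.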

Why RAG Wins:

- Zero Training Costs: No expensive GPU runs are required.

- Instant Updates: Just add new documents to your index.

- Citations: The helper can reference specific sources naturally.

- Fact-Grounded: It reduces hallucinations by anchoring answers in real documents.

RAG works best for knowledge-heavy domains. Legal advisors can cite statutes. Technical support bots can reference documentation. Frameworks like LangChain and LlamaIndex handle the heavy lifting automatically.

The Specialist Path: Fine-Tuning

Fine-tuning works by adjusting the real-valued weights of the network. It directly incorporates the knowledge into the neural network. This is perfect for when you’re looking to make your own AI assistant with a distinctive personality or style.

When to Fine-Tune:

- Style Mimicry: You need the AI to speak in a specific brand voice.

- Complex Formats: The output must follow strict JSON or code structures.

- Niche Jargon: You are teaching it medical or legal terminology.

The “Training” Process

If you choose to fine-tune or build a custom algorithm, follow this rigorous cycle.

1. Split the Information: Divide your dataset strictly. Use 70% for training, 20% for validation, and 10% for final testing.

2. Initialize: Load your pre-processed materials. Start with adjustable hyperparameters like learning rate and batch size.

3. Iterate: Evaluate performance on the validation set. If it falls short, tweak the hyperparameters or architecture and repeat.

4. Address Bias: Watch for class imbalance. If certain intents are rare, the model will tend to ignore them. Oversample these minority classes to fix it.
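Steps 1 and 4 of the cycle above can be sketched with the standard library alone. The helper names `split_dataset` and `oversample` are made up for this example, and random duplication is only one simple way to oversample. Oversampling should be applied to the training portion only, so duplicated rows never leak into validation or test data.

```python
import random


def split_dataset(rows, seed=0):
    """Shuffle, then split into 70% train, 20% validation, and the rest for testing."""
    rows = list(rows)
    random.Random(seed).shuffle(rows)
    n_train = len(rows) * 70 // 100
    n_val = len(rows) * 20 // 100
    return rows[:n_train], rows[n_train:n_train + n_val], rows[n_train + n_val:]


def oversample(rows, label_of, seed=0):
    """Duplicate random examples of rare classes until every class matches the largest."""
    rng = random.Random(seed)
    by_label = {}
    for row in rows:
        by_label.setdefault(label_of(row), []).append(row)
    target = max(len(group) for group in by_label.values())
    balanced = []
    for group in by_label.values():
        balanced.extend(group)
        balanced.extend(rng.choice(group) for _ in range(target - len(group)))
    return balanced


# Illustrative data: 90 "weather" utterances and only 10 "refund" ones.
data = [(f"utterance {i}", "weather" if i < 90 else "refund") for i in range(100)]
train, val, test = split_dataset(data)
balanced_train = oversample(train, label_of=lambda row: row[1])
print(len(train), len(val), len(test))  # prints: 70 20 10
```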

To verify your system works, track the right metrics. Start with Accuracy to see the simple percentage of correct predictions.

For a deeper analysis, look at the F1 Score. This provides a balanced view of precision and recall. If you create your own AI assistant for speech, Word Error Rate (WER) is crucial. Finally, use BLEU to judge the quality of the generated text against reference answers. These numbers tell you exactly where to tune your setup.
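Three of these metrics are simple enough to compute by hand, which helps in understanding what the numbers mean. The sketch below assumes non-empty inputs and a single “positive” class for F1; BLEU is left out because a faithful version is longer, and a library implementation is usually the better choice.

```python
def accuracy(y_true, y_pred):
    """Share of predictions that match the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def f1_score(y_true, y_pred, positive):
    """Harmonic mean of precision and recall for one class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref, hyp = reference.split(), hypothesis.split()
    prev = list(range(len(hyp) + 1))  # distances for an empty reference prefix
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + cost))
        prev = curr
    return prev[-1] / len(ref)


print(accuracy(["a", "b", "a", "a"], ["a", "a", "a", "b"]))          # prints: 0.5
print(word_error_rate("turn on the light", "turn off the light"))  # prints: 0.25
```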

Step 5: Design the User Interface

The user interface (UI) is the linchpin of your AI helper’s effectiveness, serving as the control center that ensures a smooth user experience. Consider all possible user inputs. Determine appropriate responses for each. Simplicity is a paramount principle. When designing the UI, take into account the following considerations:

- Conversational Flow: Map the user’s journey visually. Design a flowchart for every input and response. This ensures interactions feel natural.

- User Experience: Prioritize intuition. A seamless, user-friendly interface is the goal. This drives engagement and keeps users satisfied.

Define the model of interaction

Focus on core usability principles

Make it easy: Cluttered interfaces overwhelm users, and AI too. Every single element has to justify its spot. Google’s minimalist philosophy achieves this and allows the LLM to concentrate on what is important.

Consistency is key: Your AI helper should feel like one product, not a Frankenstein monster made up of parts. Same colors, same tone, and the same interaction patterns on web, mobile, and voice. A banking bot shouldn’t sound chipper on the app and mechanical on the website.

Show you’re working: Users want to feel that they are being taken care of. That typing indicator? It’s not just decoration; it’s a psychological cue that reads “I’m on it.” Pair it with discreet progress indicators for multi-step processes.

Design for everyone: Screen reader support, voice-to-text alternatives, and high-contrast options aren’t add-ons – they’re must-haves. Apple’s VoiceOver remains the gold standard for a reason.

Your 2026 toolkit for UI/UX

Prototyping and Design:

- Figma: Real-time interface design for web and mobile apps.

- Adobe XD: A user-friendly resource for prototyping and wireframing.

- Sketch: Another strong app for designing interfaces.

Testing and Feedback:

- UserTesting: Get your users’ perspective.

- Hotjar: See how your visitors are really using your website with heatmaps and videos.

UI Frameworks for Implementation:

- Botpress: Lets you develop chatbots very quickly with built-in UI tools.

- Dialogflow: Comes with a flexible chat interface for text and voice bots.

- Microsoft Bot Framework: Offers templates for creating conversational UIs.

Iterate and Improve

1. A/B Testing: Experiment with different wording, button placements, and response types. Let the data decide.

2. Listen to user feedback: Consistently gather feedback to improve the agent’s user interface.

3. Monitor KPIs: Use a dashboard to monitor key performance indicators (KPIs), such as user engagement rate, drop-off ratio, and session length, to assess the effectiveness of your UI.

Step 6: Test, Deploy, and Monitor

This phase separates successful AI assistants from abandoned experiments. Many step-by-step guides on how to create an AI assistant skip this crucial section. Don’t make that mistake.

Setting Validation Goals

First, you need clear targets. Start with black-box validation that the assistant does its job properly. If you’re building a personal chatbot to handle your calendar, it must understand dates and times reliably and never double-book appointments. You should also consider the user experience (UX). Users should get prompt, helpful answers in plain English instead of arcane technical jargon.

A large part of this is handling errors. Your AI engine shouldn’t just die when it gets a weird request. Instead, it should fail “gracefully”: respond with a polite request for clarification or suggest another solution, while keeping the conversation pointed in the right direction.
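One way to picture graceful failure is a router that never lets an exception or an unknown request reach the user as a crash. The sketch below is illustrative only: `handle_request`, the keyword matching, and the reply wording are all assumptions, and a production assistant would use a proper intent classifier.

```python
def handle_request(text: str, handlers: dict) -> str:
    """Route a request to a handler; fall back to a clarifying reply instead of crashing."""
    for keyword, handler in handlers.items():
        if keyword in text.lower():
            try:
                return handler(text)
            except Exception:
                # The handler broke: apologize and keep the conversation going.
                return "Sorry, something went wrong on my side. Could you try rephrasing that?"
    # Nothing matched: say what the assistant can do rather than returning an error.
    options = ", ".join(sorted(handlers))
    return f"I'm not sure I understood. I can help with: {options}. Which one do you need?"


# Hypothetical handlers; the alarm handler fails on purpose to show the fallback.
handlers = {
    "weather": lambda text: "Sunny",
    "alarm": lambda text: 1 / 0,
}

print(handle_request("What's the weather?", handlers))  # prints: Sunny
print(handle_request("Sing me a song", handlers))
```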

Levels of Testing

To keep things organized, break your testing into layers.

Unit tests check the smallest bits of code. Integration tests are even more vital if your platform integrates with external applications such as Google Calendar or a company’s CRM.
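As an example of a unit test at the “smallest bits of code” level, the sketch below checks a double-booking rule for a calendar assistant. The `overlaps` helper and the convention that back-to-back meetings do not clash are assumptions chosen for this illustration.

```python
import unittest
from datetime import datetime


def overlaps(start_a, end_a, start_b, end_b) -> bool:
    """Two time ranges clash if each one starts before the other ends."""
    return start_a < end_b and start_b < end_a


class OverlapTests(unittest.TestCase):
    def test_clashing_meetings_are_detected(self):
        self.assertTrue(overlaps(
            datetime(2026, 1, 5, 9, 0), datetime(2026, 1, 5, 10, 0),
            datetime(2026, 1, 5, 9, 30), datetime(2026, 1, 5, 11, 0),
        ))

    def test_back_to_back_meetings_are_allowed(self):
        self.assertFalse(overlaps(
            datetime(2026, 1, 5, 9, 0), datetime(2026, 1, 5, 10, 0),
            datetime(2026, 1, 5, 10, 0), datetime(2026, 1, 5, 11, 0),
        ))


# Run the suite programmatically so the script continues afterwards.
suite = unittest.defaultTestLoader.loadTestsFromTestCase(OverlapTests)
result = unittest.TextTestRunner(verbosity=0).run(suite)
```

An integration test would go one level up and exercise the same rule against a sandbox calendar account rather than plain `datetime` values.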

Fixing Common Bugs

You’ll likely run into issues with intent recognition. This usually happens when your training data isn’t diverse enough. To fix this, expand your training set with more synonyms and “edge cases.” For example, “What’s the weather like?” and “Is it raining?” should both trigger the same weather function.

Latency is another hurdle. In the world of LLMs, the “Time to First Token” (TTFT) makes or breaks the experience. You can speed things up by optimizing your code, using smaller or quantized model variants, or caching answers for common questions.
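Caching is the easiest of these to try. The sketch below keeps answers in memory and normalizes questions so that trivial differences in case and punctuation hit the same entry. `AnswerCache` and its `generate` callback are hypothetical names; a production cache would also need expiry and size limits.

```python
import re


class AnswerCache:
    """In-memory cache keyed by a normalized form of the question."""

    def __init__(self, generate):
        self._generate = generate  # assumed slow function: question -> answer
        self._store = {}
        self.misses = 0

    @staticmethod
    def _normalize(question: str) -> str:
        # Lowercase, drop punctuation, and collapse repeated whitespace.
        cleaned = re.sub(r"[^a-z0-9\s]", "", question.lower())
        return " ".join(cleaned.split())

    def ask(self, question: str) -> str:
        key = self._normalize(question)
        if key not in self._store:
            self.misses += 1  # only a miss pays the cost of calling the model
            self._store[key] = self._generate(question)
        return self._store[key]


cache = AnswerCache(lambda question: "We are open 9am to 5pm.")
cache.ask("What are your hours?")
cache.ask("what are your   hours")
print(cache.misses)  # prints: 1
```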

Automating these tests is the only way to scale. Tools like Appium help test mobile interactions, while Botium is built specifically to hammer conversational flows with thousands of queries in minutes.

Deployment Platforms and Release Plans

Once you’ve verified the product, it’s time to go live. Your choice of platform dictates how you reach your audience. In 2026, you have options ranging from web widgets to deep integrations with smart homes.

Choosing Your Environment

| Environment | Tech Stack | Best Use Case |
| --- | --- | --- |
| Web Apps | Dialogflow, Tidio, Zendesk Chat | E-commerce support |
| Mobile Apps | Flutter, React Native, SDKs | Personal health trackers |
| Smart Devices | AWS IoT, Alexa Skills Kit | Home automation |
| Messengers | Botpress, Twilio, MS Bot Framework | WhatsApp or Slack for business |
| Voice Interfaces | In-car systems, Smart TVs | Hands-free navigation |

Web deployment is fast and easy with JS widgets. But if you want to create AI assistant tools with true personalization, mobile apps provide better access to device sensors and notifications. Integrating with Slack or Teams can turn your agent into a “digital employee” that automates internal office work.


Modern Release Strategies:

- Blue-Green Deployment: Run two identical setups. One is live (Blue), and one has the new update (Green). You switch traffic to Green only when you’re sure it works. If anything breaks, you switch back to Blue instantly.

- Canary Deployment: Send the update to just 5% of your users first. If the metrics look good, roll it out to everyone else.

- Shadow Deployment: The new version runs in the background. It sees real traffic and generates answers, but the users don’t see them. You use this to compare the new engine’s accuracy against the old one without risking your reputation.
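A canary rollout needs a stable way to decide which users see the new version. One common approach, sketched here under the assumption that each user has a string ID, is to hash the ID into one of 100 buckets so the same user always lands on the same side. The function name `in_canary` is made up for this example.

```python
import hashlib


def in_canary(user_id: str, percent: int = 5) -> bool:
    """Deterministically place roughly `percent` percent of users in the canary group."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # stable bucket from 0 to 99
    return bucket < percent


# The same user always gets the same answer, so their experience does not flip-flop.
version = "new" if in_canary("user-42") else "stable"
canary_share = sum(in_canary(f"user-{i}") for i in range(10_000)) / 10_000
print(version, canary_share)
```

Raising `percent` step by step widens the rollout without moving users who were already in the canary group back to the old version.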

Real-Time Monitoring and Observability

The work doesn’t stop at launch. AI systems are unpredictable, so you have to watch them constantly. Modern observability focuses on the “semantic” quality of answers: are they actually good, or just well-formatted?

To know if your helper is winning, track these metrics:

- Task Completion Rate (TCR): How often did the user actually get what they came for?

- User Retention: Do people come back, or do they quit after one try?

- Token Usage and Cost: Monitor your bills. Calculate it like this: Total Cost = (Input Tokens × Rate_in) + (Output Tokens × Rate_out).

- Sentiment Analysis: Use AI to scan the user’s tone. Are they getting frustrated?
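The cost formula from the list above translates directly into code. In this sketch the rates are expressed in dollars per one million tokens, which is an assumption about how prices are quoted, and the rate values themselves are made-up numbers rather than any provider’s actual pricing.

```python
def request_cost(input_tokens: int, output_tokens: int,
                 rate_in: float, rate_out: float) -> float:
    """Cost in dollars, with both rates given in dollars per 1,000,000 tokens."""
    return (input_tokens * rate_in + output_tokens * rate_out) / 1_000_000


# Illustrative rates only (assumption): $2 per 1M input tokens, $8 per 1M output tokens.
cost = request_cost(input_tokens=1200, output_tokens=300, rate_in=2.0, rate_out=8.0)
print(f"${cost:.4f}")  # prints: $0.0048
```

Logging this value per request makes it easy to spot which conversations or features drive the bill.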

One of the biggest risks when you create an AI personal assistant is “hallucinations” – where the LLM makes things up with total confidence. Use “grounding” techniques to compare the LLM’s output against a trusted knowledge base.

You also need to watch for “model drift.” This happens when the AI’s behavior changes over time because of provider updates or shifts in how users talk.

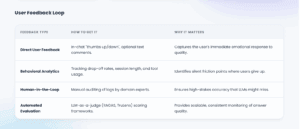

Feedback Loops and Continuous Growth

Your virtual agent should evolve. Collecting feedback helps the system adapt to new user needs.

“Human-in-the-Loop” (HITL) is the gold standard. In this setup, human experts review risky or low-confidence responses before the user sees them. This doesn’t just prevent errors; it creates a “golden dataset” for future training and optimization.
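In code, a human-in-the-loop gate can be as simple as a confidence threshold. The sketch below assumes the assistant produces a confidence score between 0 and 1 for each draft answer; the `ReviewQueue` class and the 0.8 threshold are illustrative choices, not recommended values.

```python
from dataclasses import dataclass, field


@dataclass
class ReviewQueue:
    """Send confident drafts straight to the user; hold the rest for a human reviewer."""

    threshold: float = 0.8
    pending: list = field(default_factory=list)

    def route(self, question: str, draft: str, confidence: float):
        if confidence >= self.threshold:
            return draft  # safe to show immediately
        # Held items become review work now and labeled training examples later.
        self.pending.append(
            {"question": question, "draft": draft, "confidence": confidence}
        )
        return None


queue = ReviewQueue()
shown = queue.route("What are your hours?", "9am to 5pm", confidence=0.95)
held = queue.route("Can I stop taking my medication?", "Probably", confidence=0.40)
print(shown, held, len(queue.pending))  # prints: 9am to 5pm None 1
```

Once a reviewer approves or corrects a held draft, the question and final answer can be saved as an entry in the golden dataset.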

In 2026, the gap between a basic bot and a professional assistant comes down to how you handle this final step. It’s about building an assistant that doesn’t just talk, but works reliably, learns from its mistakes, and protects your users’ data every single day.

Analyze and Enhance the LLMs

Practical Experience from LITSLINK

Two real-world examples illustrate what “works in production”:

- QueryMinds is an LLM-powered chatbot that integrates a company’s knowledge base and external sources with an emphasis on privacy and accurate responses. The published tech stack includes Python, Flask, Llama 2, and LangChain. It uses chunking + vector database semantic search, plus shows source buttons with responses.

- Acensify anomaly detection is a virtual helper that analyzes user input and detects anomalies using other ERP records, aiming to reduce input errors and correction effort. It includes a PoC smart solution with rule logic for special columns and fine-tuning coefficients, plus a custom unsupervised learning algorithm applied to tabular information.

Final thoughts

AI companions are not a silver bullet. But when they are designed with a real sense of purpose and an understanding of what the user needs, they genuinely change how work is done. That is the difference between a tool that sits idle and one that people cannot imagine being without.

Three important things to keep in mind as you proceed:

- Specificity wins: a specialised, well-trained helper will always beat a broad but muddled one.

- Focus on data quality over LLM size: Good, clean, relevant material beats a beast of an engine fed with noise.

- Deployment is not the be-all and end-all: The real work starts when users begin interacting with this system.

If you’re looking for a partner to help you create your own AI assistant, one that’s production-ready and not just a proof of concept, LITSLINK brings hands-on experience building exactly that.